AI TRISM: The Complete Guide to Trust, Risk, and Security Management in AI

Explore AI TRiSM for trust, risk, and security management in AI. Learn how to operationalize AI TRiSM for secure, compliant AI systems.

Bhagyashree

As AI becomes significant part of decision making process across different industries, the risks related to bias, data privacy and compliance can grow at increasing rate. To counter these, AI TRISM framework was created. It is a comprehensive framework focuses on transparency, responsibility and inclusivity as the pillars for building trust, mitigating risk and to ensure the security of AI systems. As per AI TRISM recent report, it indicates that by the end of the year, security teams that focus on operationalization of AI trust, transparency and security within their AI initiatives are expected to experience a 50% increase in AI adoption, goal attainment and user acceptance.

Without a powerful framework like AI TRISM, security teams could risk significant damage. Hence AI TRISM not only helps in defense mechanism but also allow responsible and trustworthy development of AI tech.

This blog deep dives into AI TRISM and How it benefits AI development and overall security.

What is AI TRISM?

Artificial Intelligence trust, risk, security management or AI TRISM is a framework which is created to validate and verify that AI systems are safe, reliable and compliant with ethical standards. According to Gartner “AI trust, risk and security management (AI TRISM) ensures fairness, trustworthiness, governance, reliability and data protection in AI deployments”. It prioritizes on controlling the risks associated with AI, like bias, transparency, data privacy and compliance and it also establishes process to create and preserve confidence in AI systems.

AI TRISM assists enterprises in implementing AI solutions that are not only efficient but reliable by adopting governance, risk management and security into AI lifecycle. In current scenario of AI dominant era, the significance of AI TRISM cannot be emphasized enough. The chances of AI systems being misused also rise as these systems gets important in decision making process across different sectors. To lower the risks and enable security, accountability and transparency in AI deployments, AI TRISM offers an systematic method. This framework mandates organizations to maintain regulatory compliance, stakeholder confidence and uphold security in the AI dominant era.

Why is AI TRISM Important?

AI TRISM framework provides unifies approach as it brings together the most crucial parts of multiple important frameworks for holistic management of AI technologies. This framework is considered important because it helps mitigate risks and cyberthreats related to advancement and growing utilization of Gen AI like Large Language Models. The key advantage of AI TRISM is in the applications in areas like finance and healthcare which includes remediation, improved measures for model monitoring and protection against cyber threats and unauthorized access.

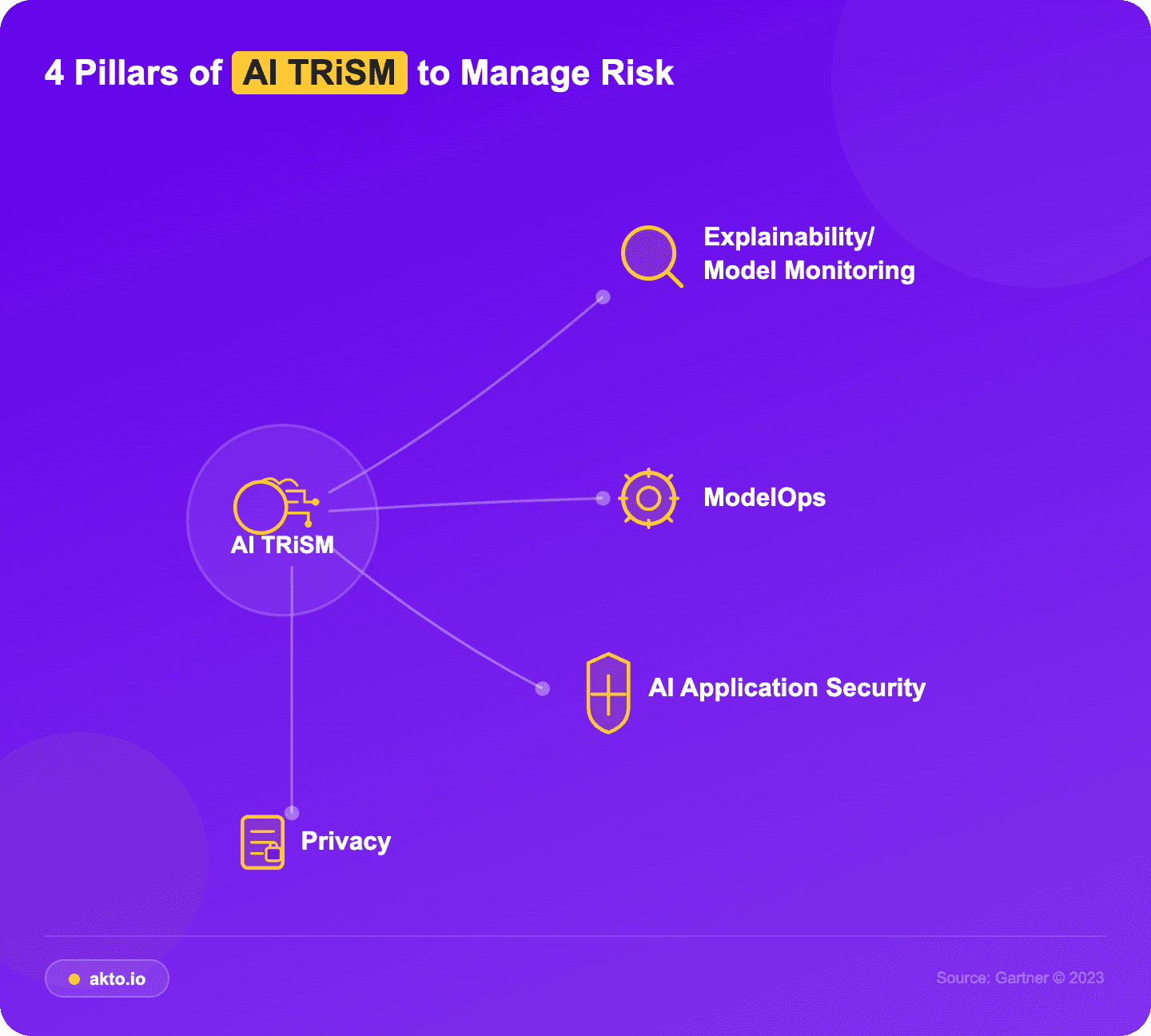

What are the 4 Pillars of AI TRISM?

AI TRISM comprises of 4 pillars. Implementing these pillars enables organizations to develop function, trustworthy AI systems and help prevent security risks. Here’s a breakdown of each of the pillars.

Image source: Gartner

Explainability

A significant part of AI development is understanding how a model processes the data, especially for high stakes application which needs responsible AI practices. Security teams should be able to make decisions on what data it uses, why it requires that information. It is important because it keeps system accountable which allows users and stakeholders to trust. If the security teams can comprehend on how it can reach conclusion, they can depend on its outputs. In case of “black box” process where it can be sure if its accurate.

Without explainability, it indicates a significant risk in AI models as it makes challenging to debug and underestimate the trustworthiness of AI. Furthermore, its a legal and regulatory liability, if users cannot trust it, they are less likely to adopt it.

Model Ops

The model operations advises both manual, automated performance and reliability management for AI models. It recommends maintaining version control over the models to track changes and issues during the development along with robust testing during every stage of the model lifecycle to ensure consistency. Besides this, periodic retraining keeps the model up-to-date with new data to preserve accuracy and relevance. These processes enable organizations to simplify and scale AI operations to match changing business needs.

AI App Sec

AI applications face numerous threats that needs a distinctive approach to security, which is known as AppSec. For example, cyber attackers may corrupt the input data to undermine model training, which could result in false or incorrect outcomes. AI AppSec protect against these risks by strictly implementing encryption of model data at rest and in-transit, and implement access controls around AI development systems. Furthermore, it enables security in all areas of AI development supply chain to allow trustworthiness which includes tooling, hardware and software libraries.

Data Privacy Risks

All models are trained on data and some of them can be confidential information. For instance, if you trained your AI model on customer information from CRM, it is protected under data privacy laws. As per regulations, security teams are required to inform the customer in case it is used or stored for training AI models. They should also be informed about how long their information will be retained and for what is it being used.

Another requirement is that these information should be strictly protected, so that only the authorized can view the information. Without proper security, a generative AI model is prone to leak sensitive information with proper prompts.

Furthermore, data privacy requirements are obligated to be followed by security teams. This compliance needs understanding on which regulations should apply to their operations. For instance, businesses that cater to customers worldwide must strictly follow certain laws like GDPR in the European Union (EU) which applies to individuals who were in this particular region when data was collected. Other regulations like CCPA protects only the users residing in California.

Some of the regulations need opt-in consent, where explicit permission is required to collect data while others are required to follow opt-out model that puts responsibility on users. These laws are required for security teams to have valid reason for get personal data and impose restrictions on the scale of such data. Besides this, they also demand a clear plan for disposing or deleting the information once the purpose is fulfilled. Furthermore, security teams must display that they have proper security measures to secure data and maintain proper documentation of user consent.

Overall, AI TRISM came into existence because organizations were seeking one unified framework that could thoroughly understand AI risk and control its inputs and outputs at runtime.

How Does AI TRISM Work

AI TRISM functions by systematically organizing the controls that govern AI systems into one structured model. It combines governance expectations, data protections and runtime evaluation so that they can be applied consistently across different systems such as agents, models and applications. It creates a single place to define how AI should work and how its behavior should be evaluated. The AI TRISM framework makes use of 3 primary approaches.

Clearly Documenting the AI systems

Organizations document the process of AI systems, the data they depend on and the conditions on which they should be used for. This information defines what the anticipated behavior looks like.

Alignment

Data policies, governance rules and acceptable use requirements are aligned so that they are not managed in separate silos. This alignment ensures that controls in other layers consists of information that is needed to analyze the activity properly.

Implementation

TRISM connects rules, documentation and evaluation criteria to the point in environment where AI interactions happen. This approach lets AI process to be evaluated using the policies that were defined earlier and escalate when something looks way risky.

Operationalizing AI TRISM

Operationalizing AI TRISM means adding risk, trust and security controls directly into how the models behave, communicate and make decisions in real time. By blending automatic red teaming, adversarial testing, continuous security validation and dynamic guardrails, companies can form static compliance to adaptive, always on AI security that can grow with evolving AI environments.

here’s how teams can operationalize AI TRISM:

Automated AI Red Teaming

Simulate real-world attacks such as misuse scenarios, prompt injection and data leakage by using automated agents to effectively identify weakness in AI systems.

Move from Static Policies to Runtime Enforcement

Shift ahead of documentation and audits by integrating security controls directly into live AI interactions which ensures policies are enforced during execution and are not just defined.

Adversarial Testing for LLMs

Test models with ambiguous, malicious, and ambiguous edge case inputs to dig vulnerabilities deep down in reasoning, context handling and safety alignment before attackers exploit them.

Regular Security Testing

Embed automated security checks into CI/CD pipelines and runtime environments so that all models update, change prompt or workflow modification is validated instantly.

Guardrails Implementation

Implement guardrails that filters input, validate outputs and implement compliance policies in real time, ensure safe and proper AI behavior.

Policy Implementation in Agent Workflows

Implement access control, data protection rules and action level validations across multiple autonomous agents that interact with APIs, systems and tools.

Closed Loop Feedback

Continuously offer insights starting from testing, runtime monitoring to policies and guardrails which enables adaptive and changing AI security matched with TRISM principles.

How Can AI TRISM be Applied For Different Systems

AI TRISM applies its controls differently depending on whether the system is a model, application or an agent, because each one of them behaves differently. The risk looks different and the enforcement points do as well. Here’s a breakdown on how this framework can be applied on systems.

Applications

Applications connects the users to models and pulls information from other systems. Which means they can create different risks. Applications could leak excess data if permissions not strictly controlled or if the context windows pull from overshared content. Runtime inspection inspects what the application returns and retrieves. It adds access controls, classification and output validation.

Models

Models get inputs and process outputs and remain underneath the application layer. TRISM scrutinizes those inputs and outputs before formatting or sent to user. For instance, it evaluates for any data extraction attempts, prompt injections or outputs that falls outside a model’s intended use. It also checks for harmful or malicious behaviors like hallucinations or unsafe responses. The layer aims for safety, model correctness and prevention of misuse.

Agents

Agents are used to run sequences of specific actions. They call tools and make decisions that could chain into more actions. TRISM verifies if these actions match the agent’s intended scope. It also monitors unexpected behaviors. For instance, when an agent all of a sudden attempts to access the systems outside its intended workflow, the system restricts the actions or can redirect it for evaluation. Agents require proper alignment checks, stringent monitoring and real-time enforcement because their behavior keeps fluctuating.

Use Cases of AI TRISM

Here are use cases that highlights the potential of AI TRISM. These two use cases shows how businesses started using AI TRISM to create innovation, improve outcomes and value for society and businesses.

Use Case 1

Danish business authority were looking to ensure their AI models are transparent, fair and accountable. So, they designed a process to implement strong ethical standards. To build this, DBA connected its ethical principles to concrete actions like:

Establishing a model monitoring framework.

Regularly checking model predictions against the fairness tests.

They use these strategies to deploy and maintain 16 AI models that monitor huge financial transactions. These strategies helped DBA to validate that their AI models complied with standards and built trust with stakeholders and customers.

Use Case 2

AI TRISM ensures safety, accuracy and reliability of AI technology to be applied in treating and assessing patients. Patient’s information is protected as private and must be kept confidential, such that decisions taken by AI should be ethical and trustworthy.

Pfizer uses AI TRISM while developing new medicines to protect the security of patients while making sure that it is done correctly utilizing the AI. AI Technology management like this ensures security and efficiency. Hence, they reduce the number of errors that could occur when developing new medicines.

Best Practices to Maximize the Effectiveness of AI TRISM

Implementing AI TRISM works best when it starts small and can grow in exponential steps. The goal is not deploy controls at once. It is to create a foundation that makes the rest of framework effective.

Begin by Visibility

It is challenging to manage unseen risk. Most of the organization do not give much importance to their AI footprint which includes shadow tools, experiments and third-party integrations. Start by building a centralized AI inventory that monitors all the deployments. Add all the technologies in use, AI-powered applications, underlying data sources and integration points which ensures full visibility into AI ecosystem and remove blind spots.

Solidify Governance

Solve the data layer before focusing on the AI layer. A weakly classified data breaks TRISM because the runtime controls and it cannot implement rules if the permissions are not proper. Begin by improving the classification, cleaning up the access and reduce broad exposure.

Discovering the AI

Many companies have models, agents and applications scattered across the teams. They must be inventoried and documented on how they work. Also they should be initial risk score so high risk systems so that they are prioritized first.

Create an AI Catalog

The catalog takes charge of TRISM because it is capable of organizing AI entities, owners and the data they depend on. It defines what normal behavior of AI should be. This context is required for runtime implementation to enable safer decisions.

Deploy Runtime Inspection

Start small with the systems that focuses on sensitive data or external AI services, as it keeps enforcement manageable and prevents slowing teams down. Besides this, the runtime controls analyze each interaction, generate blended risk scores, block or escalate risky behavior.

Properly Align with Responsibilities

TRISM is widespread across data governance, legal, AI engineering, compliance, security and business. So, every selection path must be clearly and carefully defined so that the runtime tasks reach the relevant team without hassle or confusion.

Final Thoughts on AI TRISM

AI TRISM has significantly changed into essential for governing agentic AI systems. As AI becomes more autonomous and MCP-driven, risks add up in real time, makes static policies and periodic audits ineffective. Security teams need to shift towards testing, visibility and runtime enforcement to ensure AI systems which remains secure, compliant and trustworthy. The importance of AI TRISM present in its ability to move ahead of governance frameworks and function as a living and flexible security model.

Akto lets this transition by operationalizing AI TRISM principles within actual environments. It implements policies at runtime by monitoring AI interactions and applies guardrails to prevent data exposure and exploitation. It also offers continuous agent discovery, map AI agents, MCP and integrations to various tools to various security risks.

Watch Akto’s Agentic AI security and MCP security in action. by Book a Demo today!

Experience enterprise-grade Agentic Security solution