15 Best AI Security Tools in 2026 to Secure AI Agents, GenAI & LLMs

Compare 15 best AI security tools in 2026. Learn advantages, limitations, and top criteria for choosing tools to secure AI agents, LLMs & MCP systems.

Kruti

AI is now part of how software gets built, deployed, and operated. That shift has created a new category of risk that traditional security tools were not designed to handle.

Prompt injection, shadow AI, model misuse, and data leakage through LLM interfaces are all real threats that most legacy scanners miss.

These emerging threats have given rise to dedicated AI security tools.

According to Aikido's 2026 State of AI in Security report, one in five organizations has already experienced a serious security incident tied to AI-generated code.

In this blog, we cover the best AI security tools in 2026, broken down by use case, so you can match the right platform to your actual threat surface.

What Are AI Security Tools?

AI security tools are software platforms built to protect the AI systems your organization runs and the way your teams use AI day to day.

Securing AI systems means protecting the models, pipelines, and infrastructure you build on. This includes LLM APIs, vector databases, RAG pipelines, and any application that takes user input and passes it to a model.

Threats can range from prompt injections to data poisoning and model theft to jailbreak attempts.

Meanwhile, AI security tools also help secure AI usage by governing how employees use AI tools within the organization. Also referred to as shadow AI, it helps teams that have adopted tools like ChatGPT, Copilot, and other tools that handle sensitive enterprise data.

Within these two categories, AI tools for security fall into four functional types:

Runtime security tools monitor AI applications in production. They catch prompt-injection attempts, jailbreaks, and data extractions in real time.

Red teaming tools simulate attacks against your AI systems before they go live. They probe models for harmful outputs or policy violations so you find the issues before attackers do.

Governance and compliance tools give security and legal teams visibility into what AI is being used across the enterprise for, what data it accesses, and if usage aligns with internal policy or regulatory requirements.

AI posture management tools scan your environment to find all AI assets, flag misconfigurations, and give you a continuous risk score across AI usage points.

The best AI security tools often combine more than one of these functions. But knowing which category your biggest risk falls into helps you get started on the right note.

Why are AI Security Tools Important?

In AI systems, a model's output depends on the input it receives, the context it has access to, and the instructions it has been given, none of which are fully predictable, thereby ruling out the need for traditional security tools.

Here’s what can go wrong without the right tools in place:

Prompt injection: One of the most exploited attack categories in AI applications. An attacker embeds malicious instructions inside user input or an external data source. The model reads it as a legitimate instruction and acts on it, bypassing guardrails and other security systems in place.

Data leakage: Sensitive information passed into an LLM can get exposed in outputs that external users were not intended to see. Without controls, you have no visibility into what data leaves your environment and where it goes.

Shadow AI: This is currently a major enterprise security problem, as AI tool usage has spiked. Developers and business users adopt AI tools without going through a security review. Credentials, customer data, internal code, and logic get pasted into consumer AI products.

Unsafe tool execution: A single bad prompt can trigger destructive or unauthorized actions and cause devastating effects on the overall infrastructure.

AI agent overreaching: Autonomous agents are designed to take multi-step actions with minimal human input. Without proper tools and runtime controls, an agent can exceed its intended role and make decisions that should require human approval.

Governance issues: The EU AI Act, emerging NIST AI RMF guidance, and internal compliance requirements all demand documented evidence of how AI systems make decisions, what data they were trained on, who has access, and how risks are being managed.

Runtime policy enforcement: Runtime enforcement means having controls that detect and block policy violations the moment they occur. It closes the gap between what your AI system is supposed to do and what it actually does in production.

Model supply chain risk: The models, datasets, and third-party plugins that the application depends on can all be part of an attack. A poisoned model or a compromised plugin can introduce backdoors that are extremely difficult to detect through traditional code reviews.

How We Evaluated the Best AI Security Tools

We looked at what actual security teams are using across enterprise environments and evaluated based on the following criteria:

Coverage across the AI attack surface: We prioritized platforms with meaningful coverage across at least two or more of the core risk categories, like application security, runtime protection, red teaming, posture management, and governance.

Detection quality: We looked at how tools handle false positives, whether they provide exploitability context, and whether findings map to actionable remediation steps rather than generic advisories.

AI-native architecture: We prioritized purpose-built AI-native tools over tools or platforms that just tag an “AI” label onto their regular testing engines.

Developer and security team usability: We considered how well each platform integrates into existing CI/CD pipelines, workflows, and security operations processes.

Enterprise readiness: For organizations evaluating AI security tools, deployment flexibility, role-based access controls, and audit logging are baseline requirements.

Community and third-party validation: Considered external review platforms like G2 and Gartner Peer Insights for direct user feedback.

Comparison Table of the Best AI Security Tools

Here is a side-by-side comparison of the top 15 AI security tools covered in this blog:

Tool | Category | Key Capabilities | Best For | Pricing |

|---|---|---|---|---|

Akto | Agentic AI Security + API Security | AI agent discovery, MCP security, LLM red teaming, runtime guardrails, OWASP LLM Top 10, CI/CD integration | Teams securing AI agents, MCP workflows, and LLM-powered APIs in production | Free tier available; paid plans on request |

Palo Alto Networks | AI-Powered SOC Platform | Threat detection, incident investigation, AI Access Security, cloud and network protection | Large enterprises running complex SOC operations on existing Palo Alto infrastructure | Quote-based |

Lasso Security | LLM Gateway + Runtime | Secure LLM gateway, real-time prompt and output monitoring, data masking, browser and app integrations | Orgs deploying customer-facing GenAI needing data flow control and compliance | Quote-based |

Cato Networks | AI-SPM + Runtime | AI asset inventory, AI firewall, prompt injection detection, shadow AI governance, compliance controls | Enterprises deploying public or private AI tools that need full-lifecycle security | Quote-based |

Mindgard | AI Red Teaming | Automated adversarial testing, model vulnerability assessment, CI/CD integration, continuous risk scoring | Security teams stress-testing AI systems before and after deployment | Quote-based |

Lakera LLM Guard | LLM Runtime Security | Prompt and output interception via API, prompt injection blocking, data leakage prevention, and low-latency architecture | Enterprises deploying chatbots or custom AI agents needing real-time guardrails | Quote-based |

AI/ML Security Posture | Model scanning, pipeline security, red teaming, supply chain risk, and 1runtime monitoring | Enterprises securing the full AI/ML lifecycle from build to production | Quote-based | |

Noma Security | AI-SPM + Runtime | AI asset discovery across models and SaaS, runtime protection, compliance workflows, and agent behavior monitoring | Companies needing full AI visibility and runtime protection across the entire AI lifecycle | Quote-based |

Radiant Security | Agentic SOC Automation | AI-driven alert triage, automated investigation, identity, cloud, and endpoint coverage, human-level reasoning | Security teams looking to reduce alert fatigue and accelerate incident response | Quote-based |

CalypsoAI | Runtime + Red Teaming | Agentic red teaming, real-time inference defense, observability across LLMs and agents, SIEM/SOAR integration | Enterprises scaling GenAI across multiple models, agents, and tools | Quote-based |

Cranium | AI Governance + Supply Chain | AI and ML asset inventory, model and pipeline testing, supply chain risk, compliance workflows | Enterprises needing security testing and visibility across internal and external AI systems | Quote-based |

Securiti | AI Governance + Data Security | AI model discovery across cloud and SaaS, shadow AI detection, data cataloging, GDPR/CCPA compliance | Orgs needing comprehensive AI governance across multi-cloud and hybrid environments | Quote-based |

BigID | AI Data Governance | AI TRISM, model inventory, data security controls, native mapping, and regulated industry compliance | Finance, healthcare, and manufacturing teams deploying AI across sensitive data environments | Quote-based |

Obsidian Security | SaaS + AI Agent Security | GenAI tool and extension discovery, prompt security policies, AI agent inventory, SaaS governance | Enterprises securing SaaS apps and managing AI-based access and integration risks | Quote-based |

CrowdStrike Falcon | Agentic Security Platform | AI threat detection, endpoint, cloud and identity protection, agentic SOC workflows | Enterprises needing unified detection and response across the full stack | Quote-based |

15 Best AI Security Tools in 2026

Below is a detailed breakdown of our top 15 AI security tools to consider:

1. Akto

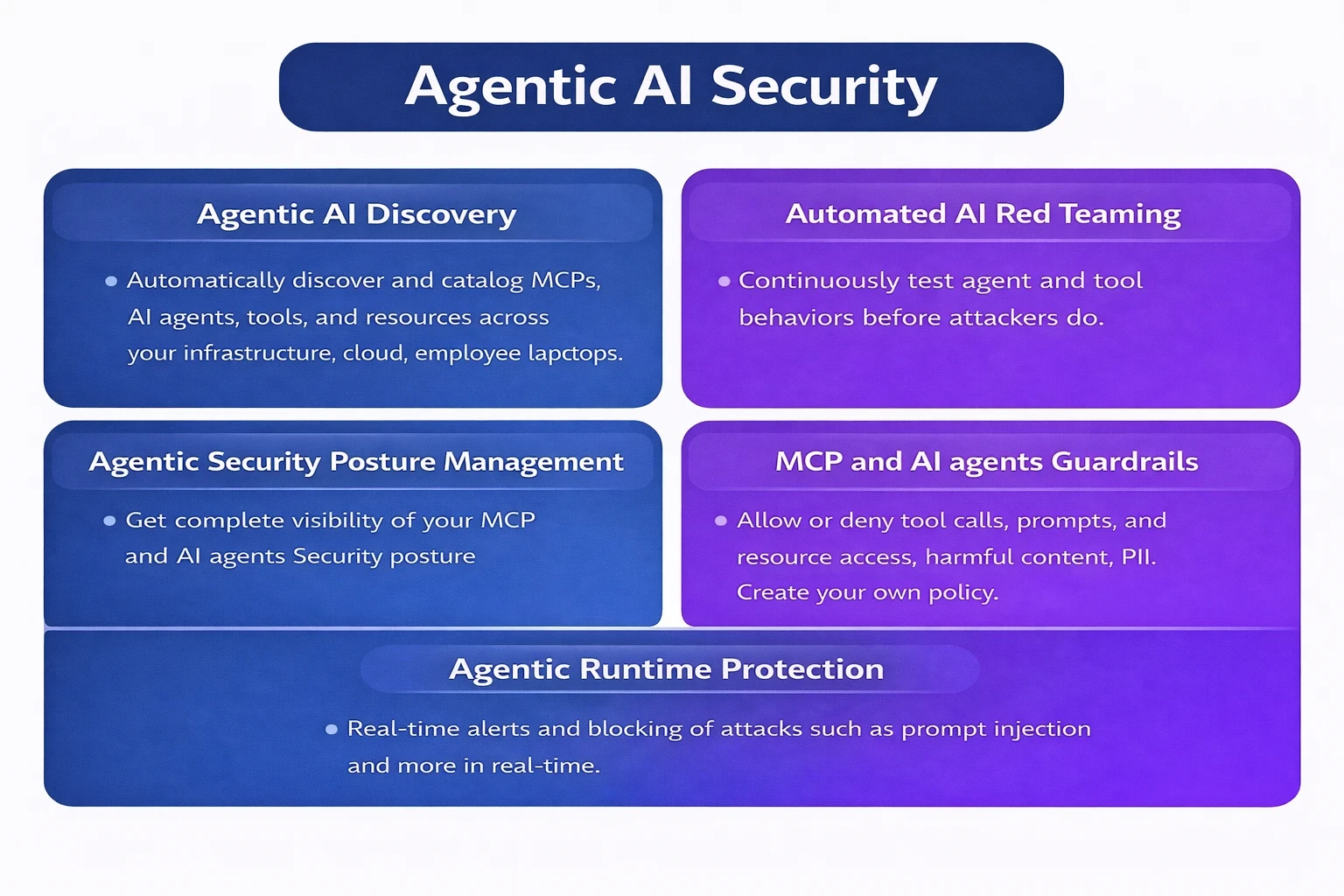

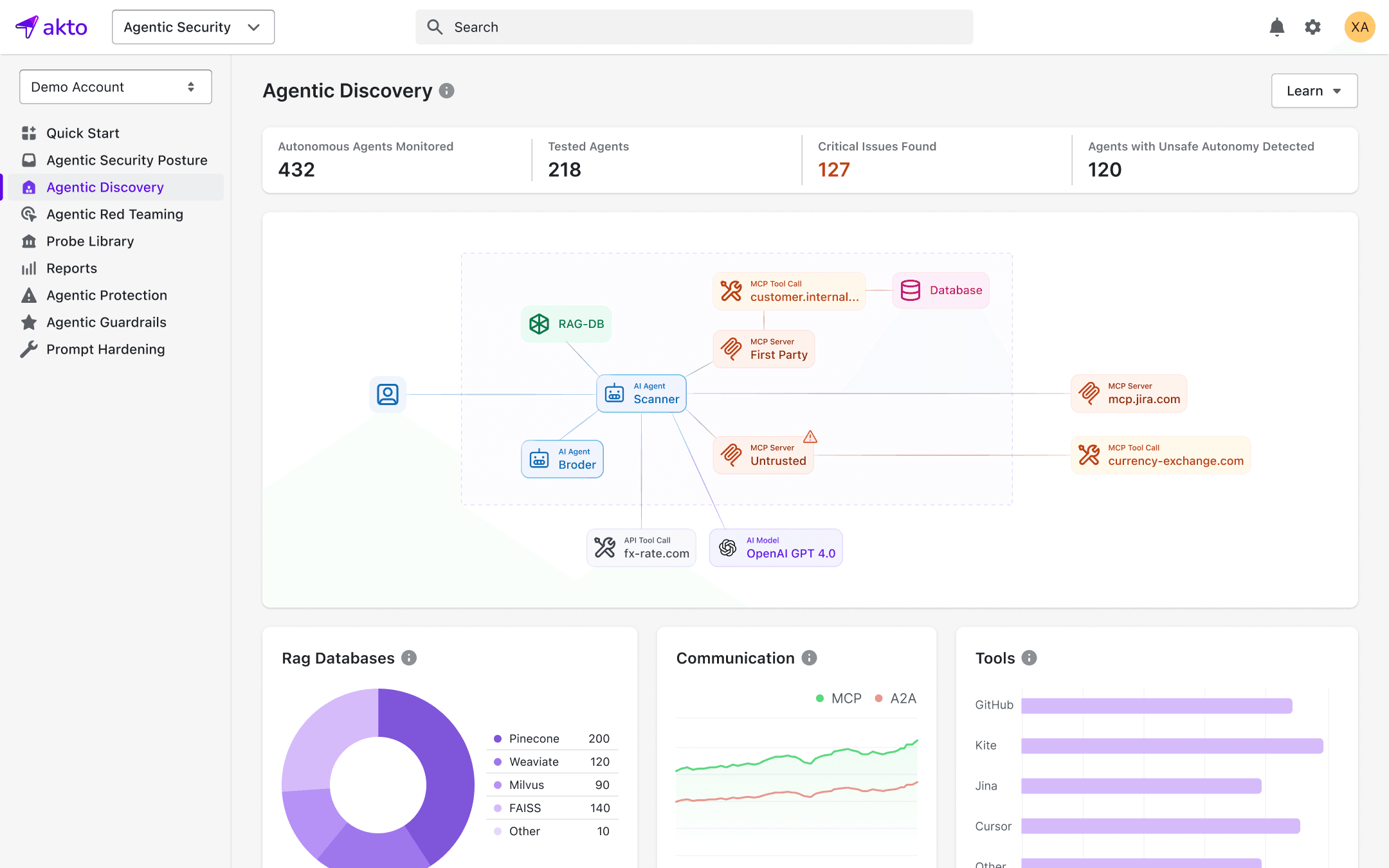

What it is: Akto is an Agentic AI Security platform for enterprises needing an extensive solution to secure AI agents, MCP servers, and GenAI applications across the full lifecycle: discovery, continuous red teaming, and runtime enforcement.

Akto maps every AI agent, MCP tool, and agent-to-system interaction across cloud infrastructure and employee endpoints, giving security teams complete visibility into what agents exist, what they can access, and what actions they can take.

Best for: Enterprises deploying MCP servers, AI agents, and autonomous workflows in production that need deep visibility, continuous automated red teaming, and runtime guardrails without slowing down development velocity.

Key strengths:

Automatically discovers and catalogs AI agents, MCP endpoints, tools, and resources across cloud and endpoints, including shadow resources

Continuously runs adversarial red teaming simulating prompt injection, tool poisoning, line jumping, context leakage, and unsafe agent behavior

Enforces inline guardrails with allow/deny controls on prompts, tool calls, and outputs at the execution layer

Runtime protection detects and blocks threats in real time

Integrates into DevSecOps pipelines for continuous inventory, runtime monitoring, and security testing across agentic systems

Limitations:

Primary focus in agentic AI and MCP security

Relatively new in the market

Why it made the list Akto is one of the few platforms purpose-built for the agentic AI threat surface. It was designed from the ground up around MCP security, agent discovery, and runtime enforcement.

2. Palo Alto Networks

What it is: A comprehensive security platform that uses AI and machine learning to automate threat detection, investigation, and response across network, cloud, and endpoint environments.

Best for: Large enterprises running complex SOC operations, particularly those already using Palo Alto's existing network security stack.

Image Source: Palo Alto

Key strengths:

Unified data ingestion from multiple sources

AI-driven investigation automation

AI Access Security module for governing employee AI usage, strong cloud and network coverage

Limitations:

Requires significant existing investment to get full value

Expensive enterprise pricing

Why it made the list: One of the most comprehensive security platforms in the market with a dedicated and growing AI security layer that enterprise teams are actively adopting.

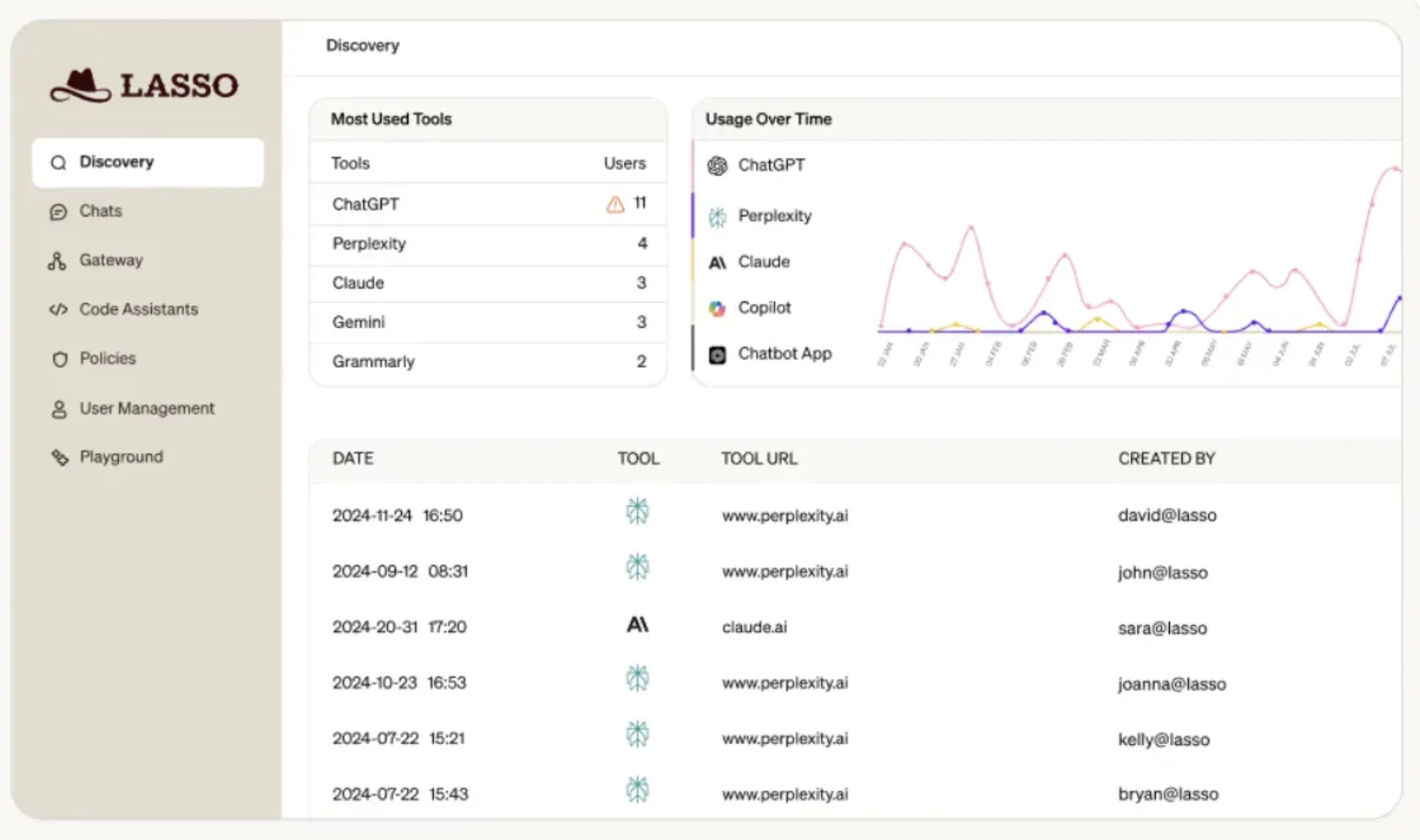

3. Lasso Security

What it is: An AI security tool purpose-built to secure enterprise GenAI and LLM usage through a secure gateway, real-time monitoring, and browser-level integrations.

Best for: Organizations deploying customer-facing generative AI that need data flow control, prompt and output oversight, and compliance coverage across SaaS and cloud.

Image Source: Lasso

Key strengths:

Secure LLM gateway, real-time detection of prompt injection and data leaks, traffic logging, and masking

Lightweight browser and app integration for shadow AI visibility

Limitations:

Narrower focus on the gateway and monitoring layer

Less coverage for agentic workflows

Why it made the list: Strong real-time enforcement capability at the LLM interaction layer, with practical integrations that make deployment fast for security teams without deep AI expertise.

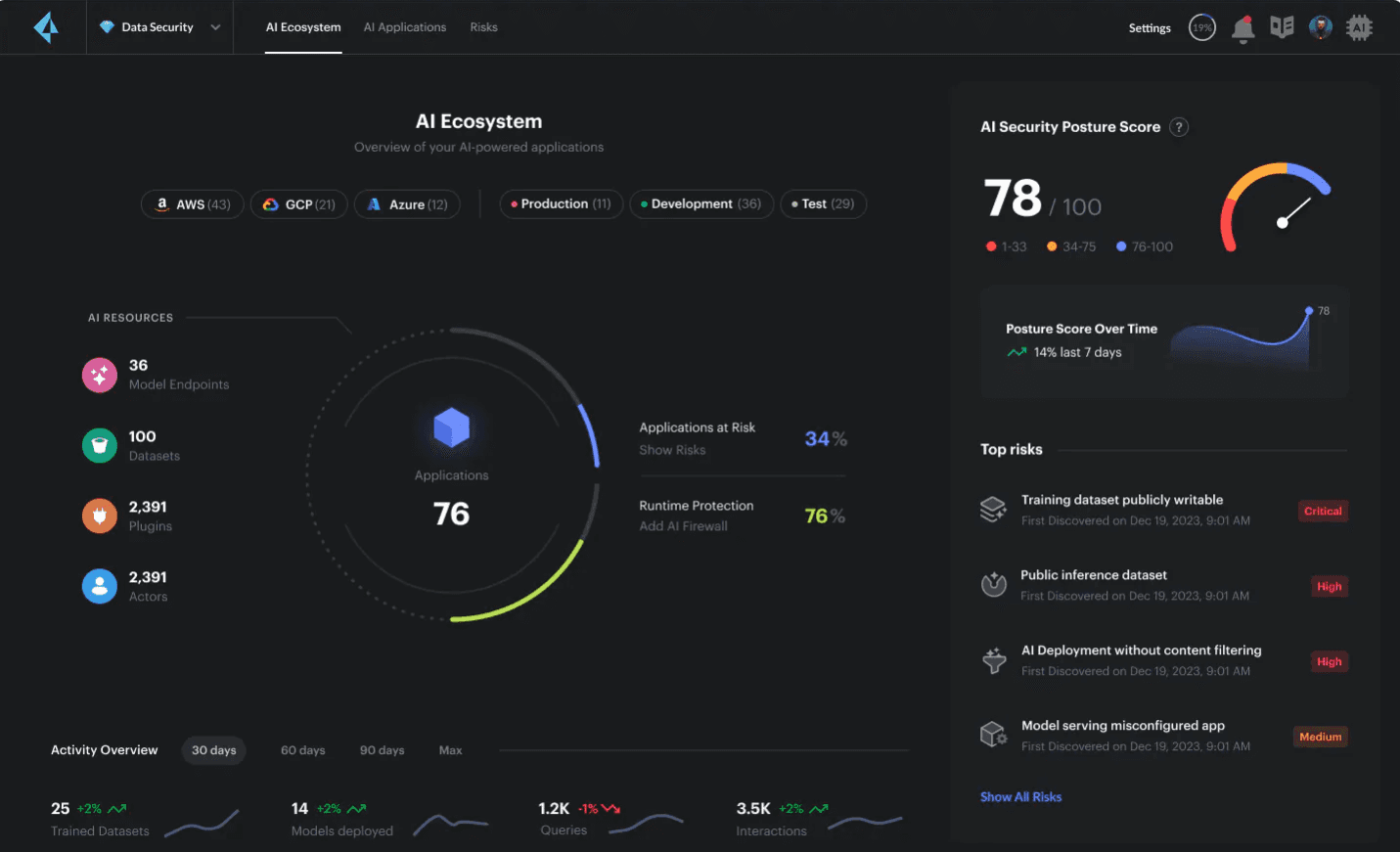

4. Cato Networks (Former AIM Security)

What it is: A unified AI security platform that combines AI-SPM, AI firewall, and runtime monitoring to secure organizations using both third-party and internally built AI tools.

Best for: Enterprises that deploy public or private AI tools and need full-lifecycle security, from asset inventory to runtime threat detection and compliance enforcement.

Image Source: Cato Security

Key strengths:

AI asset discovery and inventory

AI firewall for runtime protection

Continuous detection of prompt injection and data leakage

Limitations:

The rebranding from Aim Security to Cato Networks introduces some market confusion

Agentic AI coverage is still maturing

Why it made the list: One of the more complete AI-SPM offerings available, with runtime enforcement capabilities beyond basic monitoring.

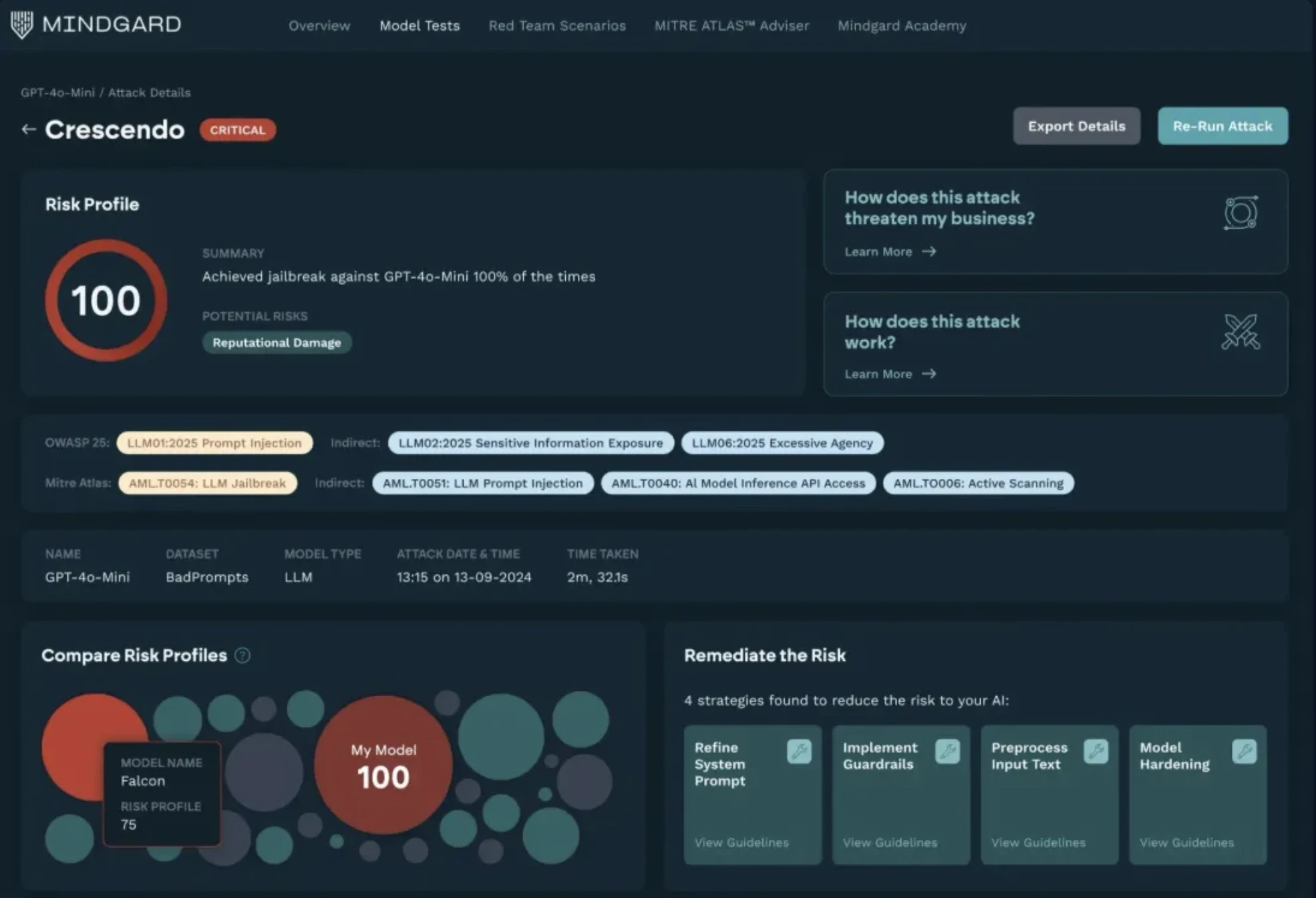

5. Mindgard

What it is: An AI red teaming platform that runs automated adversarial testing against LLMs, generative models, multimodal architectures, and custom agents.

Best for: Security teams that want to proactively identify AI-specific vulnerabilities before deployment or continuously in production.

Image Source: Mindgard

Key strengths:

Automated red teaming for prompt injection, model inversion, data poisoning, and evasion

CI/CD pipeline integration

Continuous risk scoring across AI models

Limitations:

Primarily an offensive testing tool

Teams will need separate platforms for runtime enforcement and governance

Why it made the list: Rare in-depth automated adversarial testing solutions.

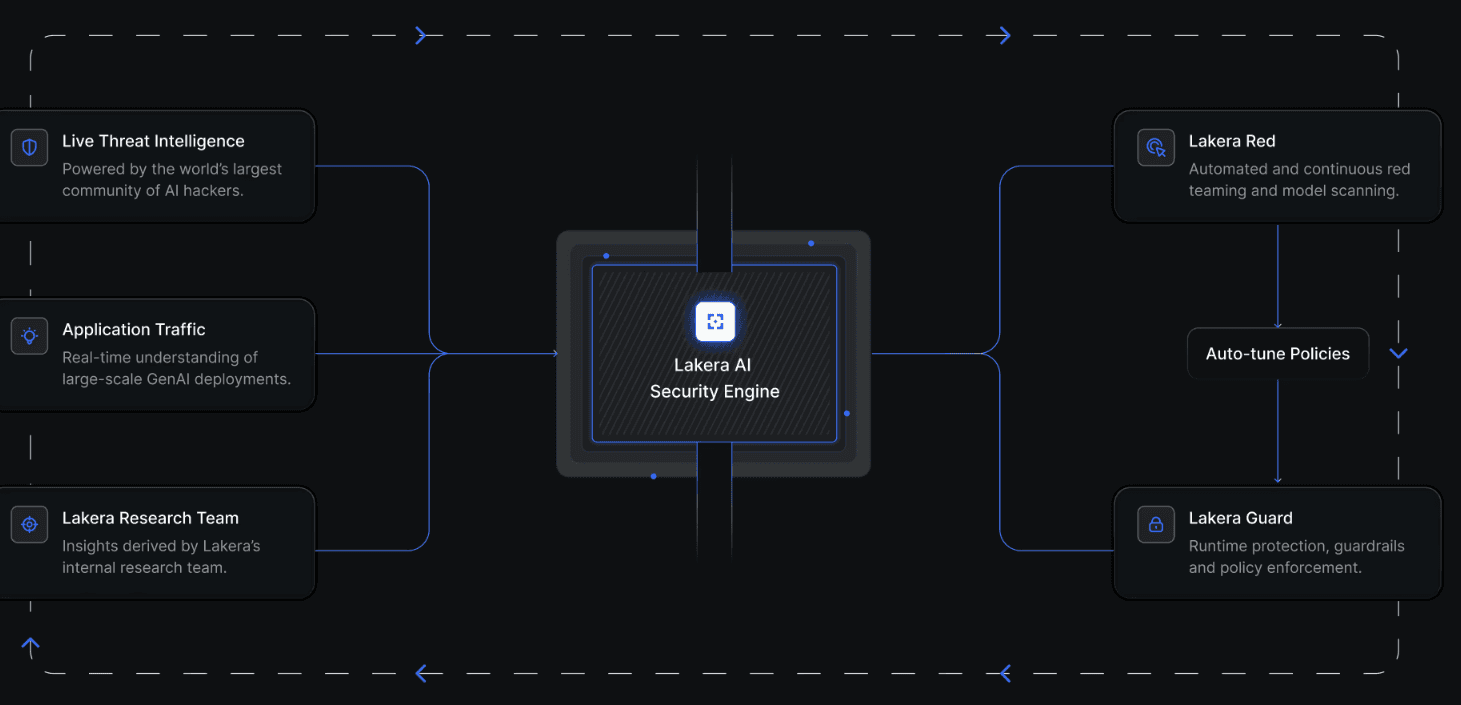

6. Lakera LLM Guard

What it is: A runtime security tool that intercepts LLM prompts and outputs via a single API call and applies real-time threat detection before returning or processing content.

Best for: Enterprises deploying chatbots or custom AI agents that need low-latency, real-time guardrails and content or data loss protection.

Image Source: Lakera

Key strengths:

Single API integration, prompt injection, and jailbreak blocking

PII and sensitive data detection in outputs

Multimodal LLM support

Built for high-volume deployments

Limitations:

Focused on the inference layer

Does not address model supply chain risk, governance, or broader agentic workflow security

Why it made the list: Fast to deploy, simple, and effective at the most common LLM attack vector.

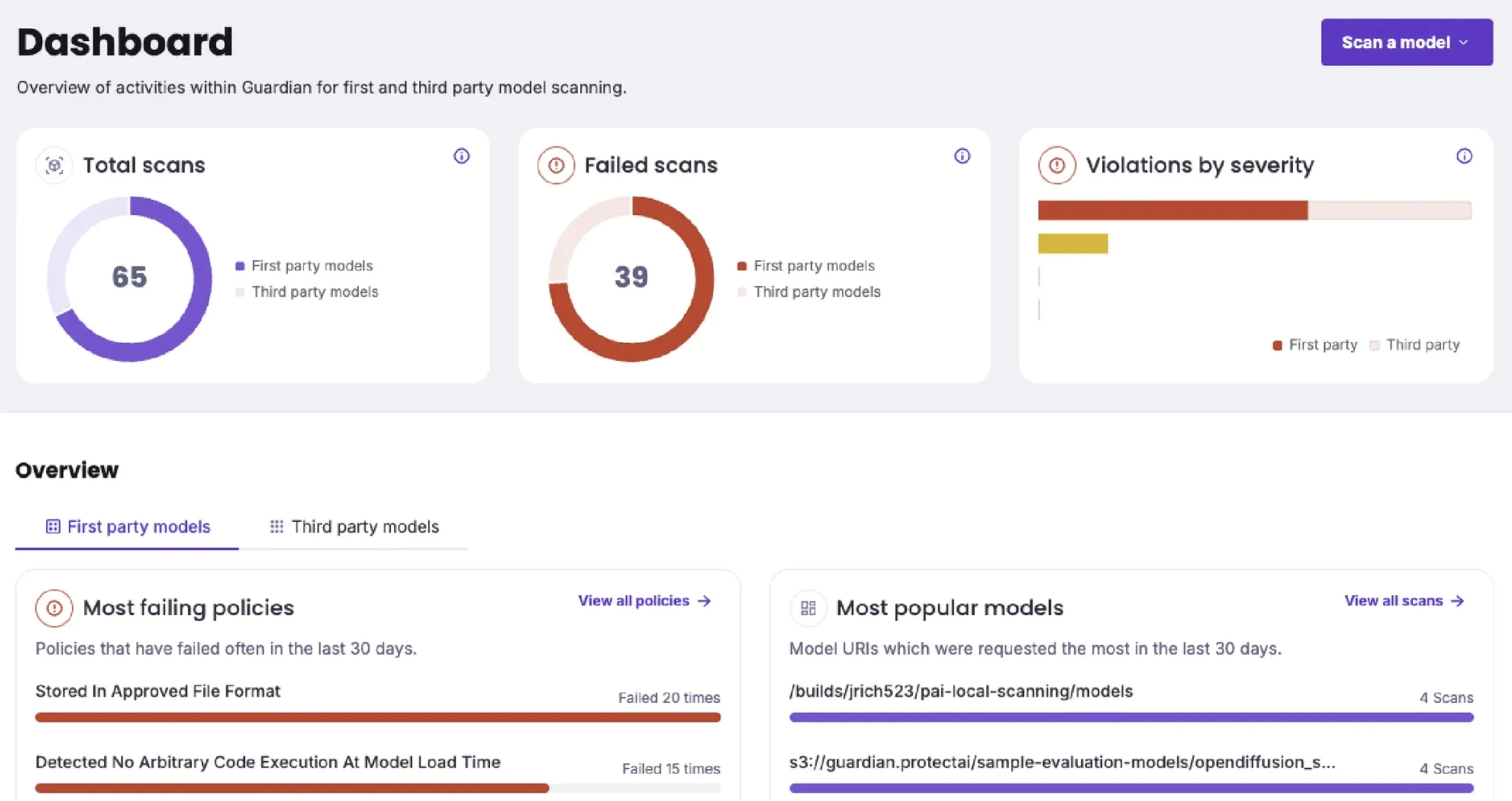

7. Protect AI

What it is: An end-to-end AI security platform that secures machine learning systems from development through production, through model scanning, testing, red teaming, and runtime monitoring.

Best for: Enterprises that need security coverage across the full AI/ML lifecycle, including development, evaluation, and production use.

Image Source: Protect AI

Model scanning and vulnerability detection

Red teaming capabilities

Supply chain risk management

Runtime monitoring for data exposure and unsafe model behavior

Limitations:

More ML-pipeline focused than agentic AI focused

Teams building with LLMs and MCP-based agents may find coverage gaps in newer agentic threat categories

Why it made the list: One of the broadest AI/ML security platforms available, with strong supply chain and model lifecycle coverage

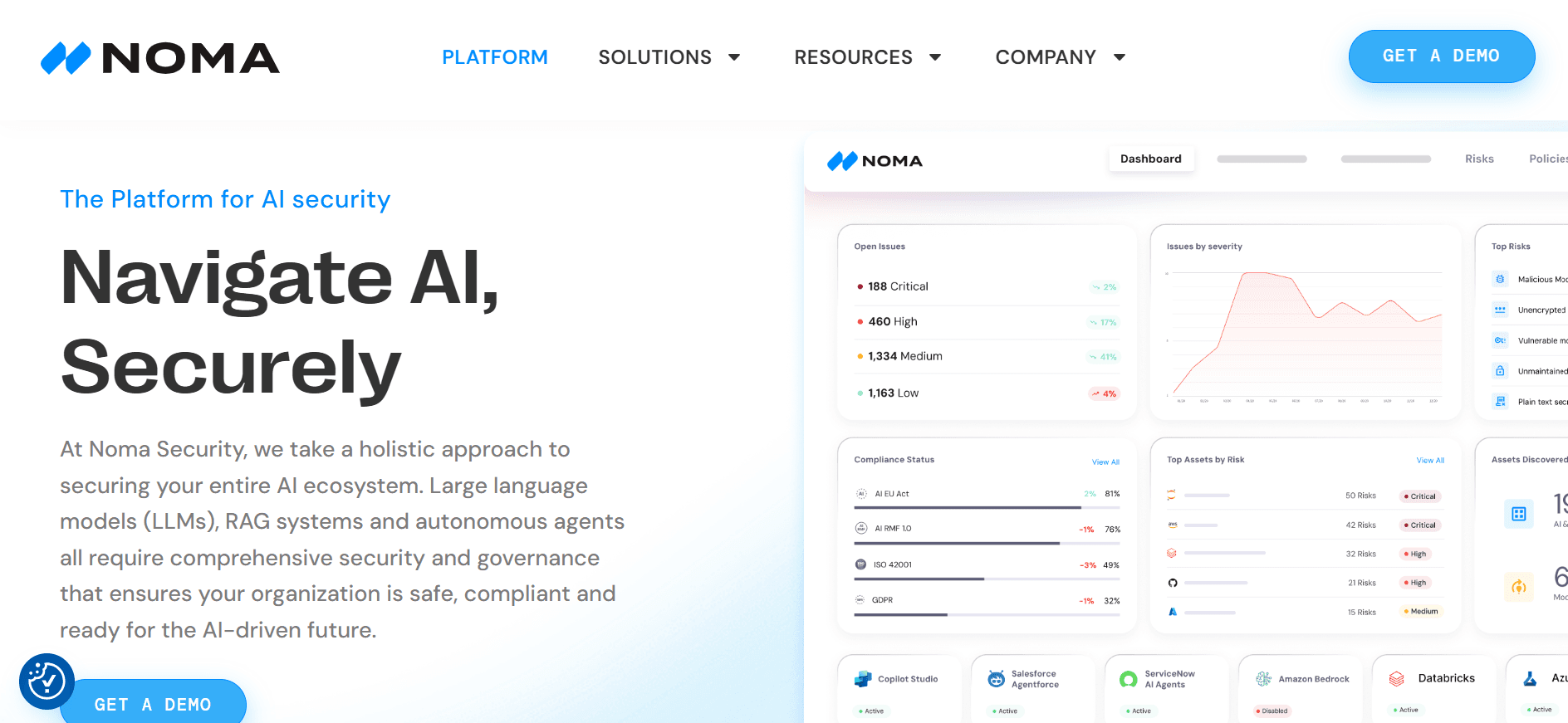

8. Noma Security

What it is: An AI security platform that provides posture management, runtime protection, and compliance workflows across models, SaaS applications, LLMs, data pipelines, and autonomous agents.

Best for: Companies that need full visibility into AI assets and runtime protection across the entire AI lifecycle, from shadow AI discovery to production monitoring.

Image Source: Noma AI

Key strengths:

AI asset discovery across cloud and SaaS

Runtime detection of prompt injection and model manipulation

Agent behavior monitoring

Compliance workflow support

Limitations:

Newer entrant

Enterprise deployment scale and long-term roadmap maturity are still being established in the market

Why it made the list: Noma covers more of the AI attack surface in a single platform than most tools at its stage, with particular strength in asset discovery and SaaS AI visibility.

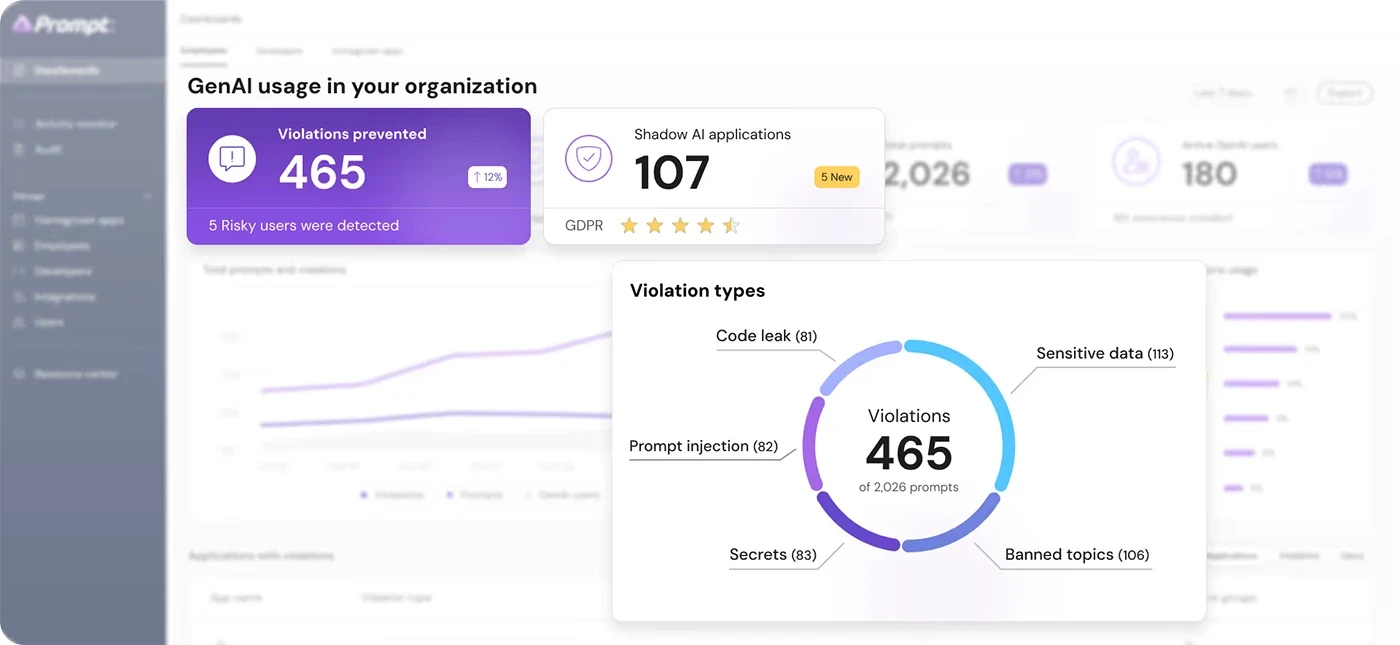

9. Prompt Security

What it is: A manipulation technique where attackers craft inputs that override or conflict with system instructions, causing a GenAI model to behave in unintended or unsafe ways.

Best for: Security teams and AI engineers building LLM-powered applications, agents, or copilots that interact with users and external data sources.

Key strengths:

Exploits natural language instead of traditional code vulnerabilities

Can influence both model outputs and downstream actions

Works across chatbots, agents, and RAG-based systems

Limitations:

Hard to detect using traditional security tools

No built-in hierarchy enforcement in most LLMs

Can appear as normal user input, making filtering difficult

Why it made the list: Prompt injection is one of the most critical GenAI security risks because it directly targets how models interpret context. As systems integrate with tools and workflows, a single injected prompt can shift behavior from safe responses to unintended actions without triggering traditional security alerts.

10. CalypsoAI

What it is: An enterprise AI security platform that secures GenAI applications and LLMs at inference time using agentic red teaming, real-time defense, and continuous observability.

Best for: Enterprises scaling GenAI deployments across multiple models, agents, and tools that need unified runtime security and compliance controls.

Image Source: Calypso AI

Key strengths:

Model-agnostic architecture

Agentic red teaming

Real-time protection against prompt injection and jailbreaks

Full observability across LLM interactions.

Limitations:

Smaller deployments may not justify the implementation overhead relative to lighter-weight runtime tools

Why it made the list: Strong combination of offensive and defensive capabilities in a single platform, with enterprise integrations that fit into existing security operations workflows.

11. Cranium

What it is: An AI governance and security tool that helps enterprises inventory, test, and secure their AI and ML ecosystems, including models, pipelines, datasets, and third-party AI dependencies.

Best for: Enterprises that need complete visibility into AI assets and security testing across the AI supply chain, particularly those with significant third-party AI dependencies.

Image Source: Cranium AI

Key strengths:

AI and ML asset discovery

Model and pipeline testing

Supply chain risk management

Compliance workflow support

Limitations:

Governance and inventory-focused

Teams looking for runtime enforcement or red teaming depth will need to include other tools

Why it made the list: AI supply chain risk is consistently underaddressed. Cranium is one of the few tools with serious capability, specifically in this area.

12. Securiti

What it is: An AI security and governance platform that uses AI to discover, categorize, and govern AI models and data across SaaS, public cloud, and private cloud environments.

Best for: Organizations needing comprehensive AI security, AI governance, and compliance across multi-cloud, hybrid, and SaaS environments.

Image Source: Securiti

Key strengths:

Automated AI model discovery across cloud and SaaS

Shadow AI detection

Compliance automation

Full multi-cloud coverage

Limitations:

Heavy governance focus

Less suited for teams primarily concerned with runtime threats or active red teaming

Why it made the list: Securiti addresses a real enterprise pain point where most organizations lack an accurate inventory of what AI models they are running or what data those models touch.

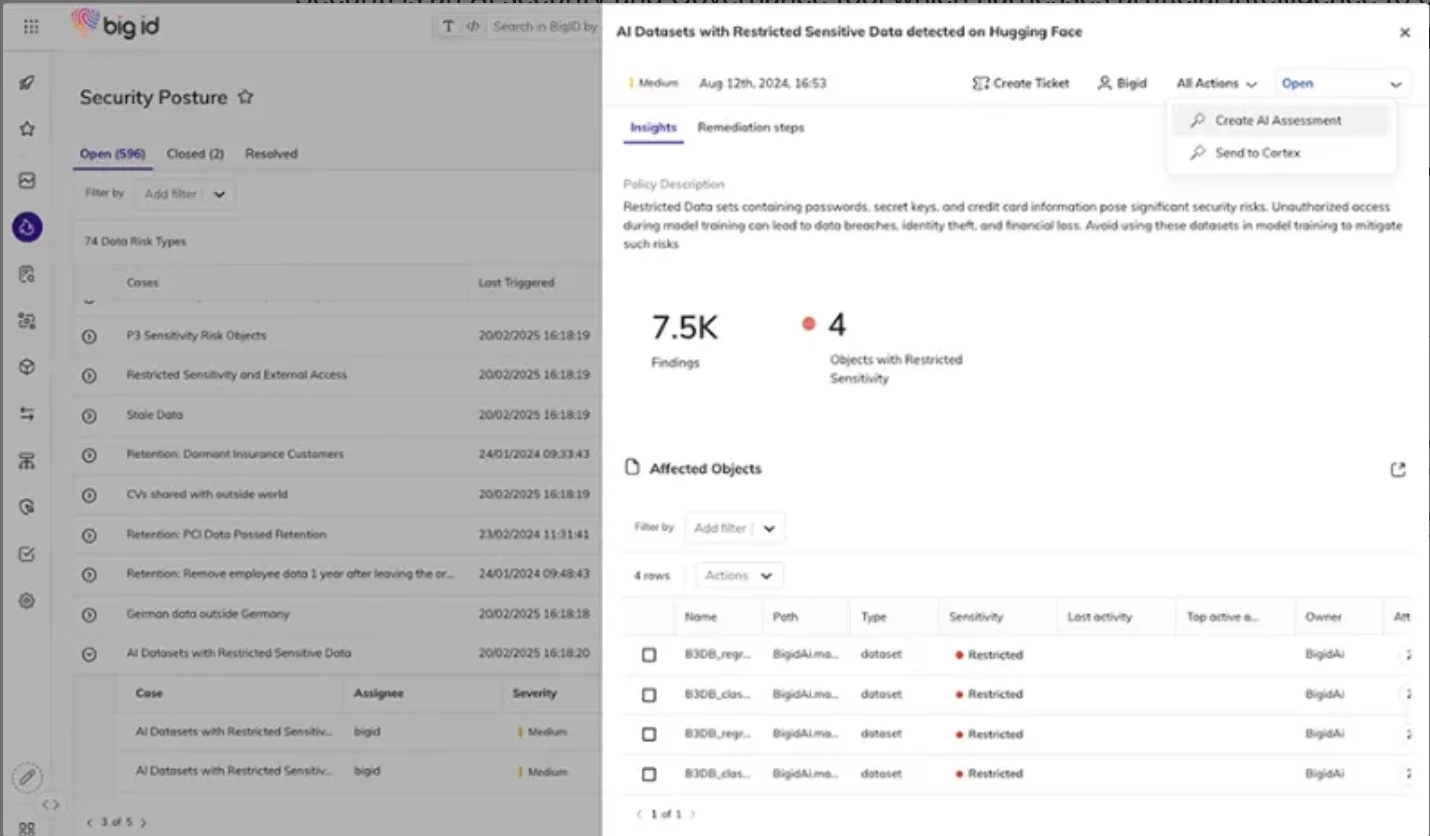

13. Big ID

What it is: An AI data governance and security platform with a dedicated AI Trust, Risk, and Security Management (AI TRISM) capability built for regulated enterprise environments.

Best for: Finance, healthcare, insurance, and manufacturing teams deploying AI across sensitive data environments where compliance and data lineage are crucial.

Image Source: Big ID

Key strengths:

AI model inventory

Native mapping for AI environments

EU AI Act and industry regulation alignment

Actionable risk prioritization

Limitations:

Data governance is the core strength

Teams looking for active threat detection or runtime enforcement against adversarial attacks will need additional tools

Why it made the list: Regulated industries have different AI security requirements than tech-native companies. BigID is one of the few platforms built with that compliance-first reality in mind.

14. Obsidian AI

What it is: A SaaS security platform that discovers and governs GenAI tools, browser extensions, and AI agents operating across enterprise SaaS applications and third-party integrations.

Best for: Enterprises that need to secure SaaS environments, manage AI-based access risks, and maintain governance over AI agents operating within business applications.

Image Source: Obsidian AI

Key strengths:

GenAI tool and extension discovery

Prompt security policies

Centralized AI agent inventory with SaaS connection mapping

Enterprise governance workflows

Limitations:

SaaS and identity-layer focused

Not designed for securing custom AI infrastructure, LLM pipelines, or internally built agentic systems

Why it made the list: Most enterprise AI risk today lives inside SaaS applications, not in custom-built infrastructure. Obsidian covers that layer with a level of specificity that broader security platforms miss.

15. CrowdStrike Falcon

What it is: An enterprise security platform that uses AI for threat detection and response across endpoint, cloud, and identity, with an expanding agentic SOC automation layer.

Best for: Enterprises that need unified detection and response across the full security stack, with AI-accelerated investigation and response built into existing workflows.

Key strengths:

AI-powered threat detection at scale

Endpoint and cloud coverage

Agentic SOC workflows

Broad ecosystem integrations

Limitations:

AI security features are embedded within a broad platform

Not purpose-built for LLM or agentic AI-specific threats

Best suited for teams already in the CrowdStrike ecosystem

Why it made the list: CrowdStrike's scale and breadth of deployment mean its AI threat detection capabilities operate with data advantages that pure AI security startups cannot match yet.

AI Security Features to Look For

Now, if you’re overwhelmed with the above AI security tools list, below is a breakdown of the top features you must look for in an AI security tool or platform:

AI Agent and MCP Security

AI agents and MCP servers are the newest and least secured part of most organizations' AI stack. Look for tools that automatically discover agents and MCP endpoints, map what tools and resources each agent can access, and enforce execution-level controls on what actions agents are allowed to take.

For security engineering teams, the capabilities to evaluate break down across three layers.

Discovery and inventory should go beyond knowing an agent exists. You need visibility into each agent's system prompt, connected tools, tool permissions, and accessible data sources.

LLM Runtime Protection

Runtime protection sits between the user and the model, inspecting prompts and outputs in real time. Key capabilities to look for: prompt injection detection, jailbreak blocking, PII and sensitive data detection in outputs, and policy enforcement at the inference layer.

AI Red Teaming

Look for tools that simulate adversarial inputs against your specific models and workflows, not generic test suites. Coverage should include prompt injection, model inversion, data poisoning, evasion attacks, and unsafe agent behavior.

Continuous red teaming against agent-specific vectors, including tool poisoning, indirect injection via RAG sources, and prompt injection through chained tool responses, should run automatically rather than on a schedule.

AI-SPM and Shadow AI Discovery

AI Security Posture Management tools scan your environment to build a complete inventory of AI assets: models, APIs, SDKs, agents, plugins, and third-party integrations. Shadow AI discovery specifically finds the tools your teams are using without security review.

Research consistently shows that the gap between sanctioned AI usage and actual AI usage inside enterprises is significant. Developers connect coding assistants to internal repositories, while business teams plug ChatGPT into customer workflows.

A good AI-SPM tool gives you continuous visibility. It should track new assets as they appear, identify which models have access to sensitive data, and surface risky permissions before they get exploited.

Governance and Compliance

As the EU AI Act, NIST AI RMF, and internal AI policies become real operational requirements, governance features are mandatory in security tooling.

Look for tools that provide model inventory and lineage tracking, so you always know what AI assets exist and where they came from. Audit trails on AI interactions and decisions need to be continuous, and not reconstructed after the fact.

SaaS and Cloud Integration

Look for native connectors to your cloud provider, CI/CD pipeline, SIEM, and the SaaS applications where your teams use AI day to day.

At the cloud level, look for native connections to AWS, Azure, and GCP. Most AI infrastructure, models, APIs, and agent deployments live inside these environments.

On the operations side, integrations with SIEM platforms let AI security alerts flow into the same place your team already investigates.

How to Choose the Right AI Security Tool

The right tool depends on where your biggest AI risk actually exists.

Here are the most common scenarios:

You are deploying AI agents or MCP servers in production

You are building LLM-powered applications

Your developers are using AI coding tools, and your employees are using ChatGPT, Claude, etc.

You are in a regulated industry like finance, healthcare, or insurance.

You are a large enterprise that needs coverage across the entire stack

Final Thoughts on AI Security Tools

If your organization is already running LLM applications, deploying AI agents, or letting employees use AI tools at work, the attack surface is already exposed.

Purpose-built AI security platforms now cover everything from agent discovery and runtime enforcement to red teaming and governance.

Akto is purpose-built for the agentic AI threat surface, helping enterprises secure their AI agents and MCP workflows.

Learn more about how Akto can help your organization strengthen its AI security posture.

FAQs

1. What are AI security tools?

AI security tools are software platforms built to protect AI systems you build and govern how AI is used across your organization. They cover threats like prompt injection, data leakage, shadow AI, and unsafe agent behavior that traditional security tools were not designed to catch.

2. What is the difference between AI security tools and AI-powered cybersecurity tools?

AI-powered cybersecurity tools use AI to do security work faster, like automated threat detection or alert triage. AI security tools protect AI systems themselves.

3. Do I need runtime protection or AI red teaming?

Both. Red teaming finds vulnerabilities before deployment. Runtime protection catches threats in production that pre-launch testing never surfaces.

4. How do you secure MCP servers and AI agents?

Start with discovery: map every agent, MCP server, and connected tool in your environment. Then enforce least-privilege access controls, add runtime guardrails on tool calls and outputs, and run continuous red teaming against agent-specific attacks.

5. What is AI-SPM?

The AI Security Posture Management continuously scans your environment to inventory all AI assets, flag misconfigurations, detect shadow AI, and give you a risk score across your AI footprint.

6. What is the OWASP LLM Top 10?

A standard list of the ten most critical security risks in LLM applications, published by OWASP. It includes prompt injection, insecure output handling, training data poisoning, model theft, and others.

Important Links

Experience enterprise-grade Agentic Security solution