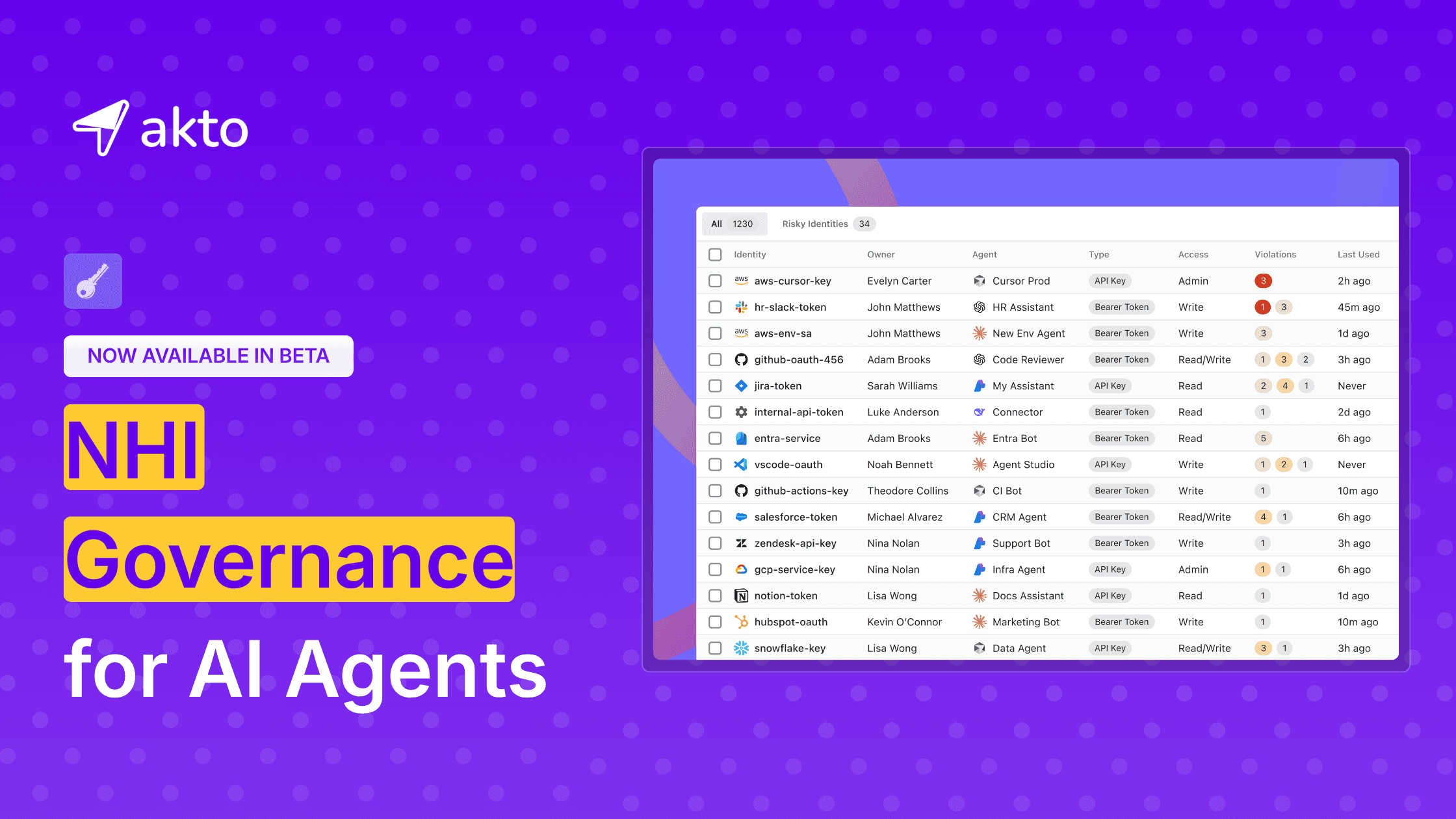

Akto launches NHI Governance for AI Agents - Now in Beta

Akto launches NHI Governance for AI Agents - discover agent credentials, enforce policies, and stop violations in real time. Now in beta.

Krishanu

AI agents no longer just suggest actions. They now act on your behalf.

From booking travel and provisioning cloud infrastructure to closing support tickets, autonomous agents are stepping into roles that carry real-world consequences. Yet while their capabilities are advancing at breakneck speed, one critical foundation is still missing: AI agent identity.

AI agents operate under the identity of the humans or systems that created them. They reuse existing accounts, shared credentials, or API keys, making it difficult to prove who is truly responsible for an action, whether that action was authorized, or whether an agent is even legitimate.

As agentic systems scale, this gap becomes a serious risk, exposing organizations to fraud, compliance failures, and a complete breakdown of accountability.

The problem with how agents work today

Most AI agents don't have identities of their own. They run on borrowed credentials. A developer deploys Cursor against production using their personal AWS key. A team wires up an HR assistant with a shared Slack token that three humans also use. A finance automation runs against a service account that also powers two other unrelated workflows.

Traditional identity systems assumed that a human is present and is authenticating all the time. But agents operate autonomously, and that's the fundamental mismatch.

Nishith Sinha - Senior Engineering Manager - AI Security & Red Team, Databricks

Non-human identities already outnumber humans by roughly 45:1, and AI agents are multiplying them faster than IAM review cycles can keep up.

Multiply that pattern across the dozens of agents a mid-sized enterprise is now running, and three failures start to compound on each other.

Ungoverned, persistent access

Agents operate on standing credentials with no verification at the point of use. When a credential is compromised or an agent's behaviour drifts, access persists. Because these credentials were never issued with agent autonomy in mind, there is no automatic trigger to rotate them, scope them down, or retire them when the agent's task changes. Risk compounds faster than any team can detect.

Privilege escalation without review

Agents invoke tools and assume roles dynamically. Over the course of a session, an agent can accumulate privileges that no one explicitly approved, chaining one permission into another across systems that no single team has full visibility into. Traditional IAM review cycles run quarterly. Agents accumulate access in minutes.

No attribution, no audit trail

When an agent acts on behalf of a user, the audit record cannot tell them apart.

A log line that reads "Evelyn accessed production S3" could mean Evelyn accessed production S3. It could also mean an agent Evelyn deployed six months ago did, without her knowledge, using a credential she had forgotten about. When something goes wrong, there is no reliable way to separate what a person did from what an agent did on its own.
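To make the attribution gap concrete, here is a minimal sketch of an audit record that keeps the human and the agent apart. The `AuditEvent` type, its field names, and the `report-bot` agent are hypothetical and used only for illustration; they are not part of Akto's product or any cloud provider's log format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; field names are assumptions for illustration."""
    action: str                      # e.g. "s3:GetObject"
    resource: str                    # e.g. "production-bucket"
    credential_owner: str            # human the credential was issued to
    agent_id: Optional[str] = None   # set only when an agent performed the action


def describe(event: AuditEvent) -> str:
    """Render a log line that keeps the human and the agent distinct."""
    if event.agent_id is None:
        return f"{event.credential_owner} performed {event.action} on {event.resource}"
    return (
        f"agent {event.agent_id} performed {event.action} on {event.resource} "
        f"on behalf of {event.credential_owner}"
    )


direct = AuditEvent("s3:GetObject", "production-bucket", "evelyn")
delegated = AuditEvent("s3:GetObject", "production-bucket", "evelyn", agent_id="report-bot")
print(describe(direct))
print(describe(delegated))
```

Without a field like `agent_id`, both events collapse into the same "Evelyn accessed production S3" line, which is exactly the ambiguity described above.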

The existing toolchain (generic NHI platforms, secrets managers, and traditional IAM) wasn't built for identities that make decisions. It was built for service accounts that run the same script every day.

Introducing NHI Governance for AI Agents

Closing that gap takes more than a new tool. It takes a governance layer built for how agents actually get deployed, used, and audited in a real enterprise. That is what Akto NHI Governance for AI Agents provides - now available in beta.

Request Early Access to NHI Governance for AI Agents here →

The identity community has begun to converge on a name for what agents actually need: blended identity. Nishith Sinha describes it as verifying two things at once - the agent's own identity, and the delegated authority of the human or system it acts for. Neither the agent nor the user's delegated credential is allowed to act alone. Databricks, Amazon, and Microsoft have all begun implementing variants of this pattern in their own AI platforms. Akto brings that same discipline to the credentials your agents already use.
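As a rough illustration of the blended-identity idea, the sketch below allows an action only when both halves are present and agree. The `AgentIdentity` and `Delegation` types, the `verified` flag, and the scope strings are assumptions made for this example; they do not describe how Akto, Databricks, Amazon, or Microsoft implement the pattern.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    verified: bool  # assumed outcome of some agent attestation step


@dataclass(frozen=True)
class Delegation:
    delegator: str           # human or system granting authority
    agent_id: str            # agent the grant was issued to
    scopes: FrozenSet[str]   # actions the delegator approved


def authorize(agent: Optional[AgentIdentity],
              grant: Optional[Delegation],
              action: str) -> bool:
    """Allow an action only when both halves of the blended identity check out."""
    if agent is None or grant is None:
        return False  # neither half may act alone
    if not agent.verified:
        return False  # the agent's own identity failed verification
    if grant.agent_id != agent.agent_id:
        return False  # the grant was issued to a different agent
    return action in grant.scopes


bot = AgentIdentity("travel-bot", verified=True)
grant = Delegation("evelyn", "travel-bot", frozenset({"calendar:read"}))
print(authorize(bot, grant, "calendar:read"))   # both halves present and in scope
print(authorize(bot, None, "calendar:read"))    # agent alone is rejected
```

The design choice worth noting is that a missing or mismatched half is a hard failure rather than a fallback to whichever credential happens to be available.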

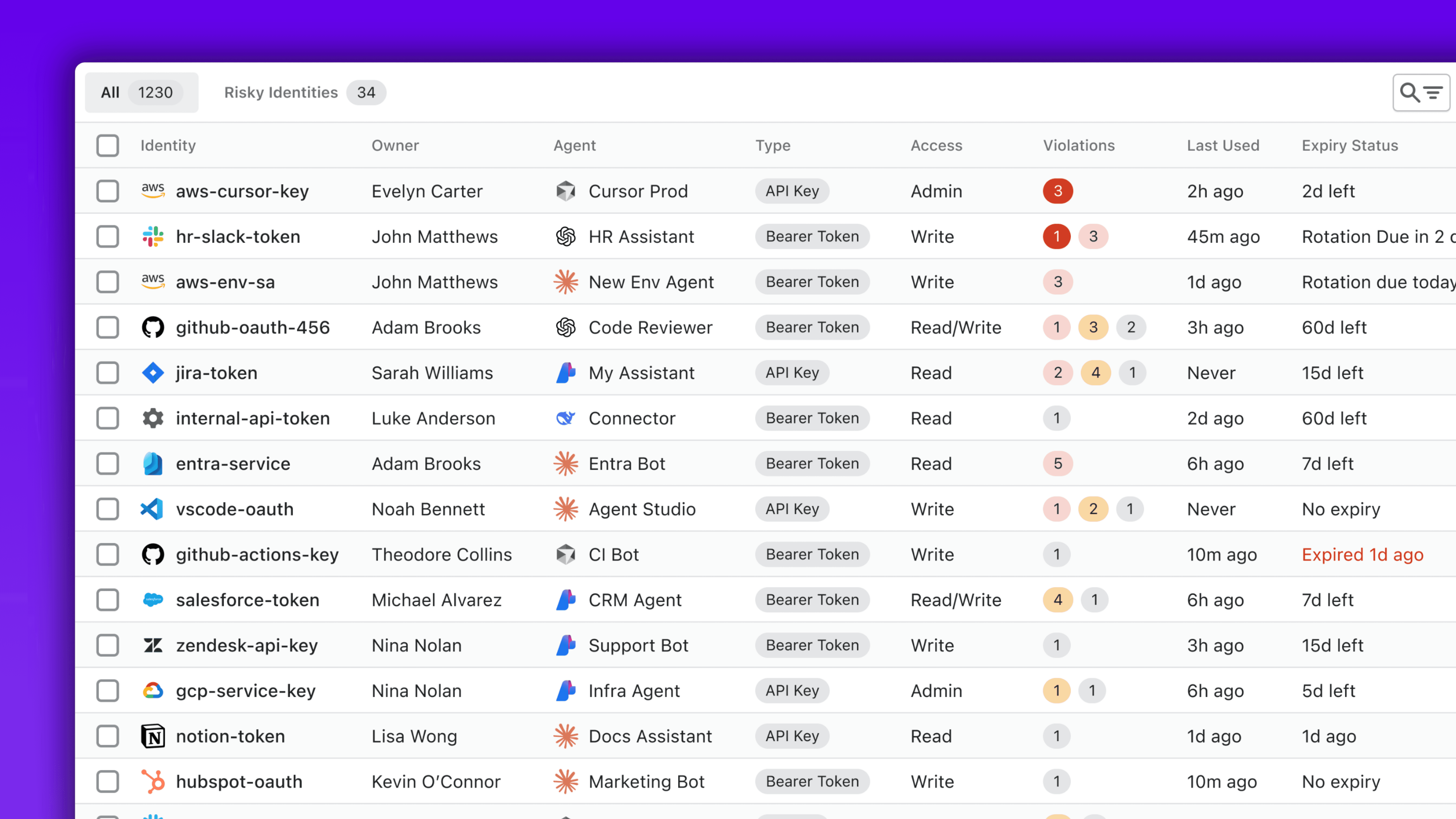

Discover every NHI tied to your agents

Akto inventories every credential an agent touches: API keys, bearer tokens, OAuth credentials, and service account keys. Each one is tied back to three things that most organizations cannot connect today:

The agent using it

The human accountable for it

Its scope of access

Expiry, rotation, and last-used data are tracked continuously.

That means when a new agent gets deployed in your environment, it shows up in Akto with its credentials, its owner, and its blast radius surfaced automatically. When a credential goes dormant, Akto flags it. When an agent inherits a credential with more access than the task requires, Akto surfaces the mismatch.
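The inventory described above can be pictured as one record per credential plus a couple of simple checks. This is a minimal sketch under stated assumptions: the `CredentialRecord` fields, the 90-day dormancy threshold, and the scope strings are all hypothetical and are not taken from Akto's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, List


@dataclass(frozen=True)
class CredentialRecord:
    """Hypothetical inventory entry linking a credential to agent, owner, and scope."""
    credential_id: str
    kind: str                        # e.g. "api_key", "oauth", "service_account_key"
    agent_id: str                    # the agent using it
    owner: str                       # the human accountable for it
    granted_scopes: FrozenSet[str]   # what the credential can do
    required_scopes: FrozenSet[str]  # what the agent's task needs
    last_used: datetime


DORMANT_AFTER = timedelta(days=90)  # illustrative threshold, an assumption


def findings(record: CredentialRecord, now: datetime) -> List[str]:
    """Return dormancy and over-scope findings for one credential."""
    issues: List[str] = []
    if now - record.last_used > DORMANT_AFTER:
        issues.append("dormant")
    excess = record.granted_scopes - record.required_scopes
    if excess:
        issues.append("over-scoped: " + ", ".join(sorted(excess)))
    return issues


record = CredentialRecord(
    credential_id="key-1",
    kind="api_key",
    agent_id="finance-bot",
    owner="evelyn",
    granted_scopes=frozenset({"s3:read", "s3:write", "iam:admin"}),
    required_scopes=frozenset({"s3:read"}),
    last_used=datetime(2024, 6, 1, tzinfo=timezone.utc),
)
print(findings(record, now=datetime(2025, 1, 1, tzinfo=timezone.utc)))
```

Comparing granted scopes with required scopes is one simple way to express the "more access than the task requires" mismatch; a real system would need a trustworthy source for what the task actually requires.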

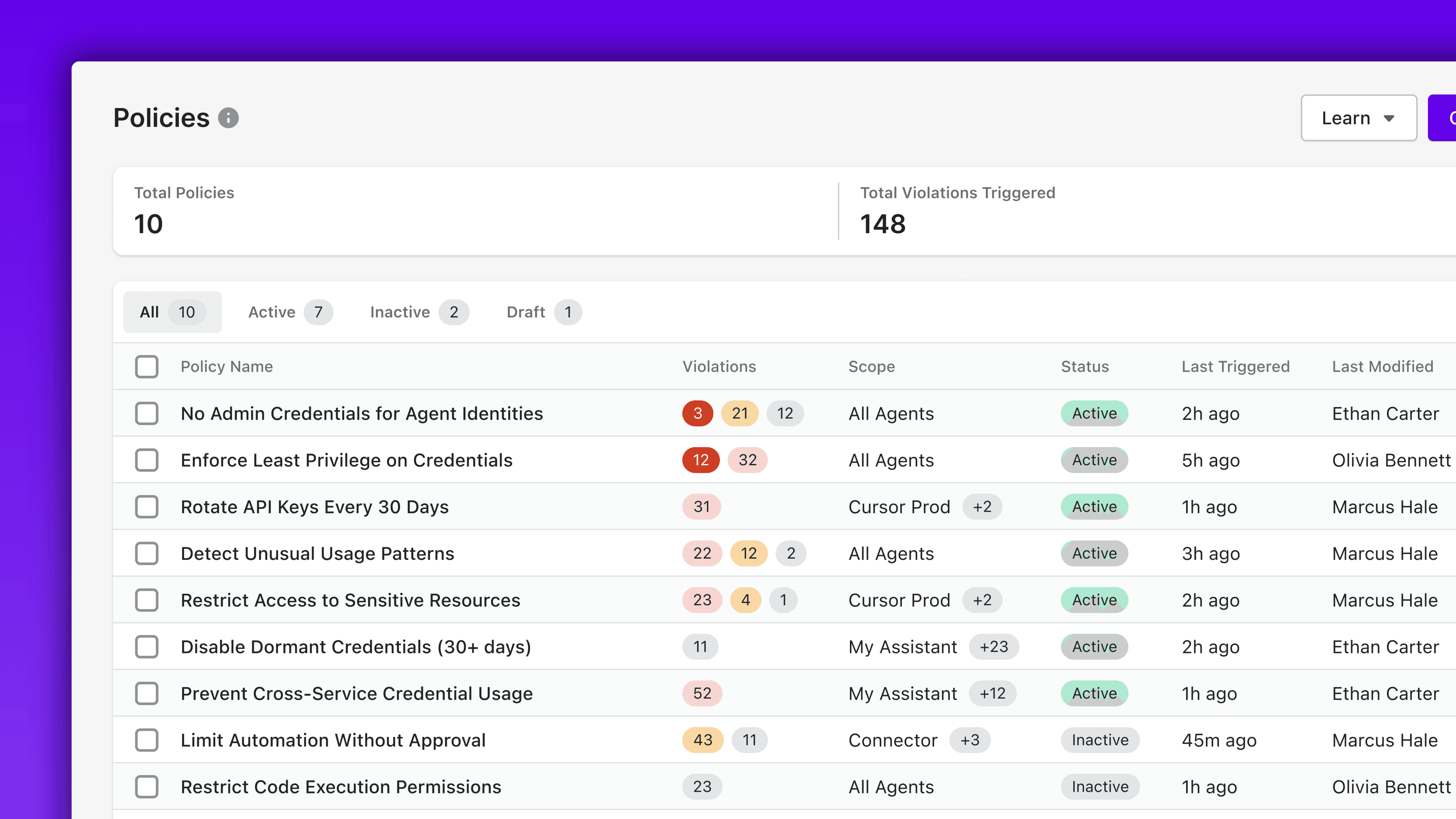

Enforce policies on every credential your agents use

Akto ships policies built specifically for the agent threat model:

No admin credentials for agent identities

Prevent cross-service credential use

Limit automation without approval

Restrict code execution permissions

Detect unusual usage patterns

These aren't generic NHI policies retrofitted for agents. They are rules that only make sense if you understand how agents actually fail.

Policies can be scoped tightly, applying only to a specific agent, or broadly, applying across every agent in the fleet. Each policy has a named owner, a lifecycle state (active, inactive, draft), and a clear record of when it last triggered. The result is a policy library that reflects how your security team actually thinks about agent risk, not a set of defaults imported from a different era of identity governance.
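The policy attributes named above (scope, owner, lifecycle state, last trigger) map naturally onto a small data structure. The sketch below is hypothetical: the `Policy` class, the use of `agent_id=None` to mean fleet-wide, and the rule that only active policies apply are assumptions for illustration, not Akto's schema or behaviour.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class PolicyState(Enum):
    ACTIVE = "active"
    INACTIVE = "inactive"
    DRAFT = "draft"


@dataclass
class Policy:
    name: str
    owner: str                                  # named owner of the policy
    state: PolicyState
    agent_id: Optional[str] = None              # None means fleet-wide (assumption)
    last_triggered: Optional[datetime] = None   # when the policy last fired

    def applies_to(self, agent_id: str) -> bool:
        """Assume only active policies are enforced, within their scope."""
        if self.state is not PolicyState.ACTIVE:
            return False
        return self.agent_id is None or self.agent_id == agent_id


fleet_wide = Policy("No admin credentials for agent identities", "secops", PolicyState.ACTIVE)
scoped = Policy("Restrict code execution permissions", "secops",
                PolicyState.ACTIVE, agent_id="build-bot")
draft = Policy("Detect unusual usage patterns", "secops", PolicyState.DRAFT)

for policy in (fleet_wide, scoped, draft):
    print(policy.name, policy.applies_to("hr-bot"))
```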

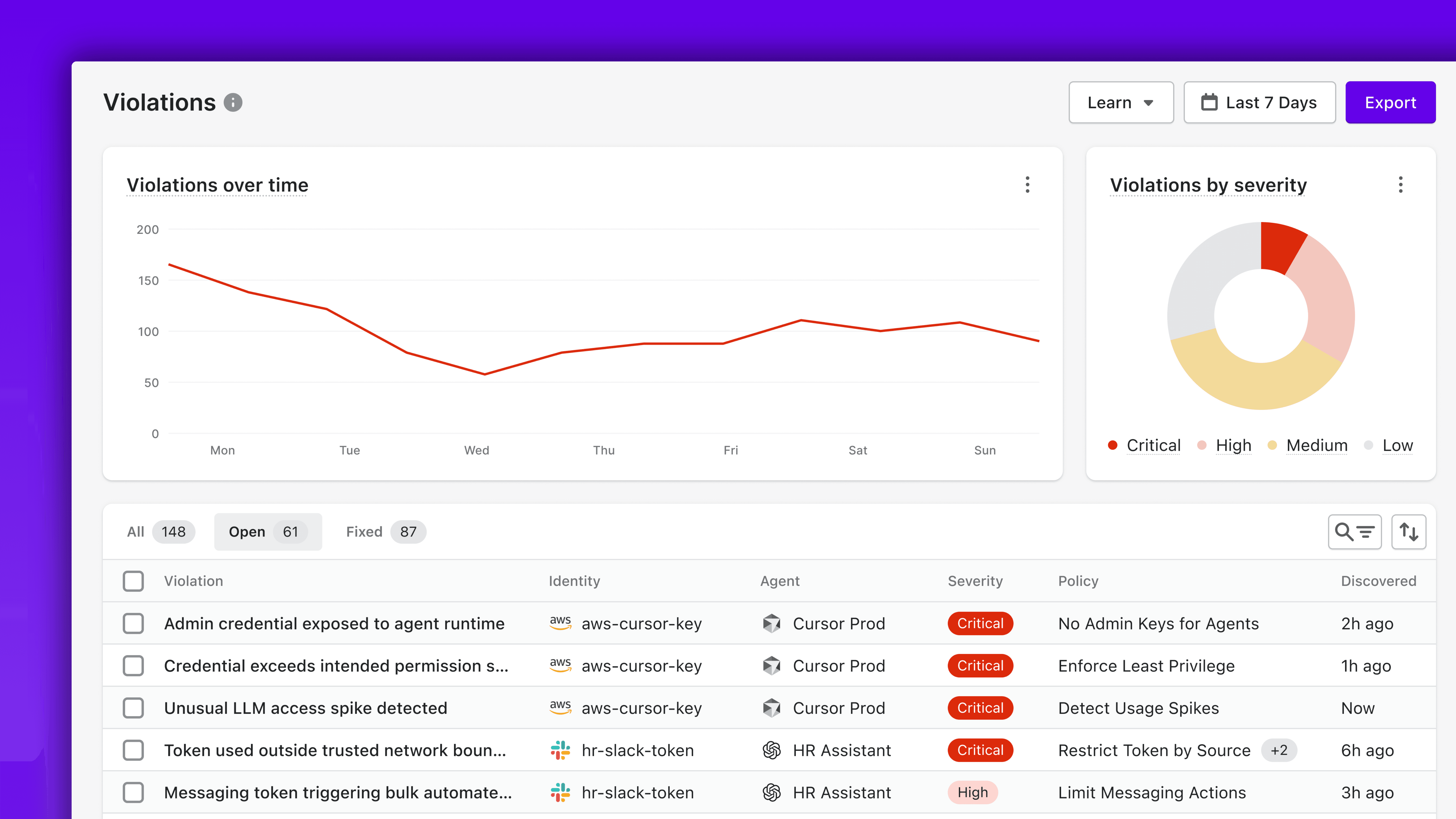

Detect and remediate policy violations in real time

When an agent breaks policy, Akto logs the violation with severity, the specific identity involved, the agent responsible, and the policy that was violated.

Every violation comes with its blast radius (what systems were affected, what data was reachable, and what privilege escalations are possible), along with a clear remediation plan.
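A violation record of the kind described here might look like the following sketch, which evaluates the "No admin credentials for agent identities" policy against a credential's scopes. Everything in it is an assumption made for illustration: the `Violation` fields, the `system:permission` scope naming, the fixed `"high"` severity, and the remediation text.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class Violation:
    """Hypothetical violation record; fields mirror the attributes listed above."""
    policy: str
    severity: str
    identity: str                       # the specific credential involved
    agent_id: str                       # the agent responsible
    affected_systems: Tuple[str, ...]   # a simple stand-in for blast radius
    remediation: str


def check_no_admin(identity: str, agent_id: str,
                   scopes: FrozenSet[str]) -> Optional[Violation]:
    """Flag any scope ending in ':admin' (an assumed naming convention)."""
    admin_scopes = sorted(s for s in scopes if s.endswith(":admin"))
    if not admin_scopes:
        return None
    systems = tuple(s.split(":", 1)[0] for s in admin_scopes)
    return Violation(
        policy="No admin credentials for agent identities",
        severity="high",
        identity=identity,
        agent_id=agent_id,
        affected_systems=systems,
        remediation="Replace with a credential scoped to the agent's task",
    )


violation = check_no_admin("key-1", "finance-bot", frozenset({"iam:admin", "s3:read"}))
print(violation)
```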

Why Akto? Agent-native matters

The category most adjacent to this, non-human identity security, was built for a different problem. Service accounts, bots, and third-party SaaS connections don't make decisions. They run fixed scripts against predictable permissions. They fail in predictable ways. Retrofitting that worldview onto agents produces tools that inventory credentials but can't tell you which agent is using them, which human is accountable for them, or whether their behaviour matches what was sanctioned.

AI agents don't follow scripts. They decide what to do next, chaining actions, making judgment calls, reaching further than any static credential was meant to. Their access patterns, blast radius, and failure modes are fundamentally different from the NHIs IAM teams have managed for the last decade. Treating them as just another kind of service account is how security programs end up with 1,230 identities, 34 of them risky, 190 with open violations, and no way to triage.

Akto NHI Governance for AI Agents is agent-native from the ground up. The product is organized around the agent itself.

That agent-first architecture is what makes attribution, policy enforcement, and violation detection work the way a security team needs them to.

It also means NHI governance doesn't stand alone. It sits alongside Akto's existing capabilities for Agentic Discovery, Agentic Guardrails, and Prompt Hardening. One platform covering every surface where agents create risk, rather than a point tool that solves one slice and leaves the rest to other vendors.

NHI Governance for AI Agents is currently in beta. Connect with us to get early access.
