Akto Partners with Arcade.dev to Secure AI Agents in Production

Akto partners with Arcade.dev to enhance security for AI agents in production, ensuring safer deployments, improved monitoring, and robust protection against vulnerabilities.

Akash

AI teams are moving beyond simple chat interfaces. They’re deploying agents that can search across tools, access internal systems, trigger workflows, and take real actions on behalf of users.

At that point, success is no longer just about model quality or prompt design. Once agents can interact with real systems, teams need stronger controls around authorization, governance, visibility, and security across the full path from model decision to tool execution.

That’s exactly what the Akto + Arcade.dev partnership delivers. Arcade provides the MCP runtime for AI agents, with built-in authorization, highly reliable tools, and centralized governance. Akto adds visibility, monitoring, and security controls across agent behavior and tool interactions, giving teams the ability to detect threats, enforce security policies, and test agent behavior across real workflows.

Runtime + Security: How the Stack Works

Arcade’s runtime gives companies the infrastructure to get multi-user agents into production at scale without rebuilding auth, token handling, and permission management from scratch. Agents act under real user identities with scoped permissions, teams control which tools each agent can discover and execute, and every tool call is logged and policy-checked.

Akto adds the security layer for AI agents, MCP servers, tools, and LLM applications. Akto helps teams monitor agent behavior, assess risk, detect prompt attacks, identify sensitive data exposure, and run AI security testing across real agentic workflows.

Put simply: Arcade helps agents take actions safely, governing what agents can do and how they execute. Akto helps teams understand and secure those actions in production.

That combination matters because in modern agent systems, the risk often isn’t just the prompt. It’s what happens after the model decides what to do next.

Where Agent Risk Actually Shows Up

With a simple chatbot, most security concerns are easy to visualize: unsafe prompts, sensitive data leakage, or harmful outputs.

But agents introduce a different class of risk.

A model can generate a perfectly reasonable response and still create a serious security issue if it then:

Calls the wrong tool

Operates with overly broad permissions

Pulls sensitive data from internal systems

Triggers an unintended workflow

Chains multiple steps in ways that bypass business logic or approval boundaries

In other words, the most important security boundary may no longer be the model alone. It’s the moment where reasoning turns into action.

That’s why production teams need both a runtime that governs how agents authorize and execute tool calls, and a dedicated AI agent security layer that continuously tests and monitors how those workflows behave in the real world.

As AI systems move from responding to acting, security has to move with them.

How Akto Secures Arcade-Powered Agent Workflows

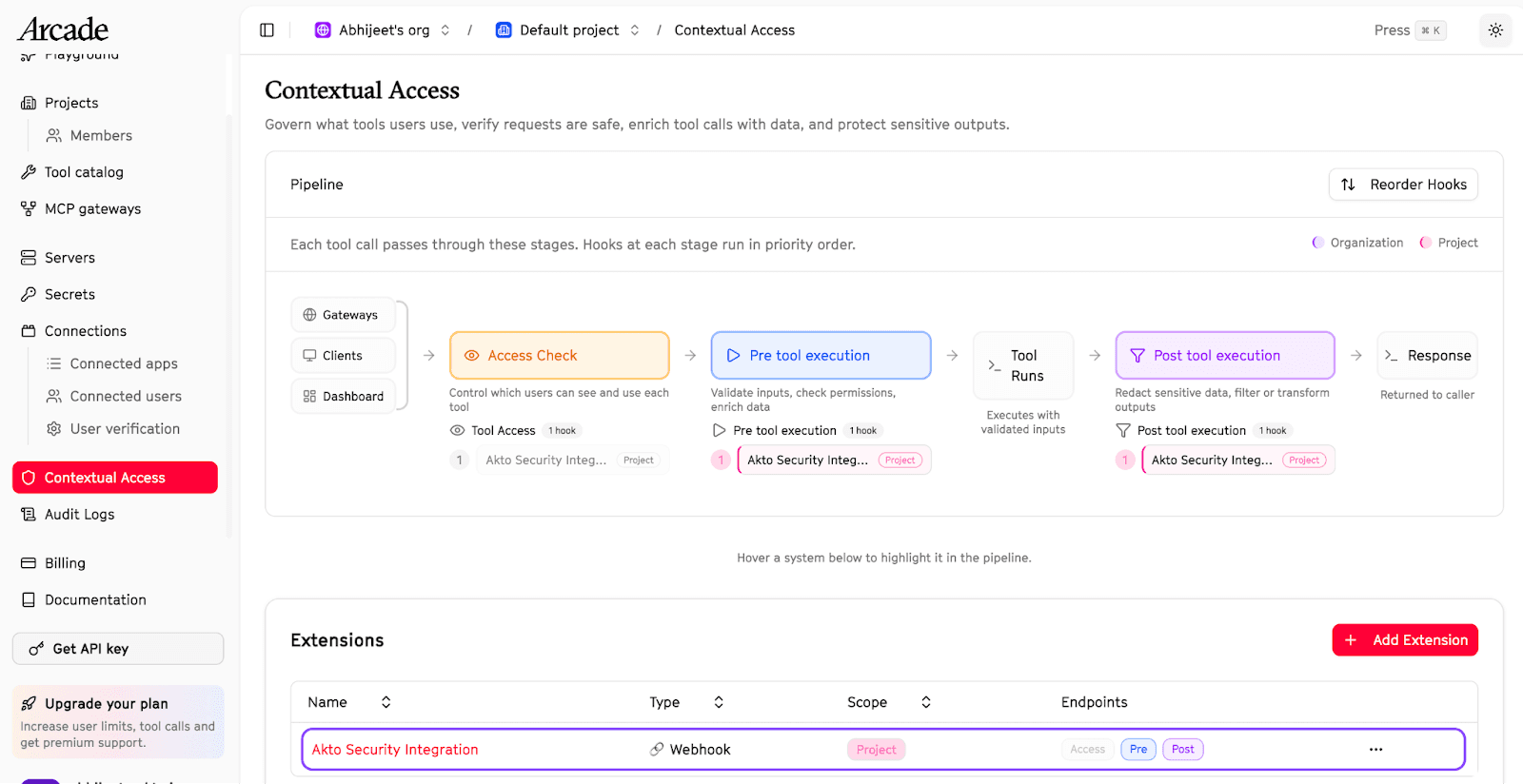

Arcade's runtime includes Contextual Access: runtime security hooks that let teams inject custom security, compliance, and filtering logic directly into the tool execution pipeline. Three hook points, each invoked via webhook, let you intercept agent activity at the moments that matter:

Access Hook: Fires when an agent requests available tools, before the agent even knows a tool exists. Controls tool visibility based on entitlement systems, RBAC logic, or any custom access decision.

Pre-Execution Hook: Fires before a tool call executes. Validates, transforms, or blocks the call based on tool identity, inputs, and user context.

Post-Execution Hook: Fires after a tool returns its result, before that result reaches the LLM. Scans, redacts, or blocks output before data enters the model context.
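To make the Access Hook concrete, here is a minimal sketch of the kind of visibility decision it enables. Arcade's actual webhook payloads are not shown in this post, so the `Tool` shape, the role names, and the tool names below are illustrative assumptions rather than Arcade's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    required_role: str  # hypothetical entitlement a user needs to discover this tool


def access_hook(user_roles: set[str], tools: list[Tool]) -> list[Tool]:
    """Return only the tools this user is entitled to discover.

    Tools filtered out here are never listed to the agent, so it cannot
    attempt to call them.
    """
    return [tool for tool in tools if tool.required_role in user_roles]


# Hypothetical tool catalog, for illustration only.
catalog = [
    Tool("Gmail.ListEmails", "support"),
    Tool("Stripe.IssueRefund", "finance"),
]

visible = access_hook({"support"}, catalog)
print([tool.name for tool in visible])  # ['Gmail.ListEmails']
```

In a real deployment the role lookup would likely come from an entitlement system or RBAC service instead of a hard-coded set.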

With Akto Argus for Agentic AI Security plugged into these hook points, every tool invocation can be evaluated in real time. This gives teams visibility and control inline with Arcade's execution pipeline, not as a separate monitoring layer bolted on after the fact.

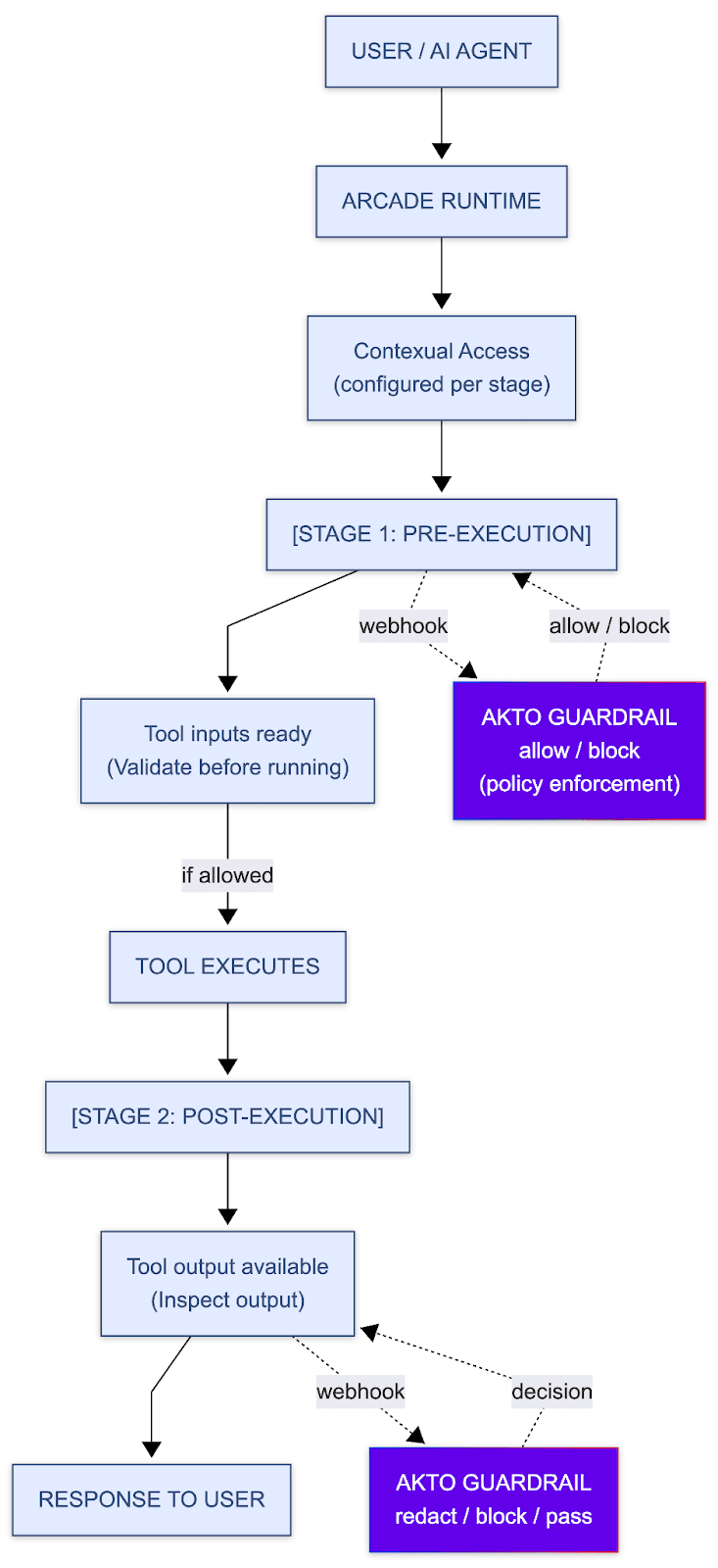

Pre-execution: Arcade sends tool inputs to Akto for policy evaluation. Akto can inspect the request and decide whether to allow or block the tool call before it runs.

Post-execution: Arcade sends the tool output back to Akto for a second review. Akto can then pass, redact, or block the response before it reaches the model and, ultimately, the user.
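The pre- and post-execution flow can be sketched as a wrapper around a tool call. This is a toy model under stated assumptions: the function names, the blocked-phrase list, and the email regex are hypothetical stand-ins for real policy evaluation, and in the actual integration these checks would run in Akto behind Arcade's webhooks, not in the same process as the tool.

```python
import re
from typing import Callable

# Toy stand-ins for a real policy engine (assumptions, not Akto's rules).
BLOCKED_PHRASES = ("ignore previous instructions",)
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class ToolCallBlocked(Exception):
    """Raised when the pre-execution check rejects a tool call."""


def pre_execution(tool_name: str, inputs: dict) -> bool:
    """Return True if the call may run, False if it should be blocked."""
    text = " ".join(str(value) for value in inputs.values()).lower()
    return not any(phrase in text for phrase in BLOCKED_PHRASES)


def post_execution(output: str) -> str:
    """Redact email addresses before the result leaves the pipeline."""
    return EMAIL.sub("[REDACTED_EMAIL]", output)


def guarded_call(tool_name: str, tool_fn: Callable[..., str], inputs: dict) -> str:
    """Run a tool only if the pre-check passes, then filter its output."""
    if not pre_execution(tool_name, inputs):
        raise ToolCallBlocked(f"{tool_name} blocked by pre-execution policy")
    raw_output = tool_fn(**inputs)
    return post_execution(raw_output)


# Hypothetical tool used only to exercise the wrapper.
def lookup_owner(query: str) -> str:
    return f"Owner of {query}: jane.doe@example.com"


print(guarded_call("Crm.LookupOwner", lookup_owner, {"query": "ACME"}))
# Owner of ACME: [REDACTED_EMAIL]
```

The point of the structure is that the tool function itself never runs when the pre-check fails, and its raw output is never returned unfiltered.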

This makes Akto part of the live runtime path, not just an external monitoring layer.

How the Akto Guardrail Works

Using Arcade’s execution-stage webhooks, Akto can evaluate tool inputs before execution and inspect tool outputs after execution, making it possible to stop unsafe actions before they run and control what results are allowed back to the user.

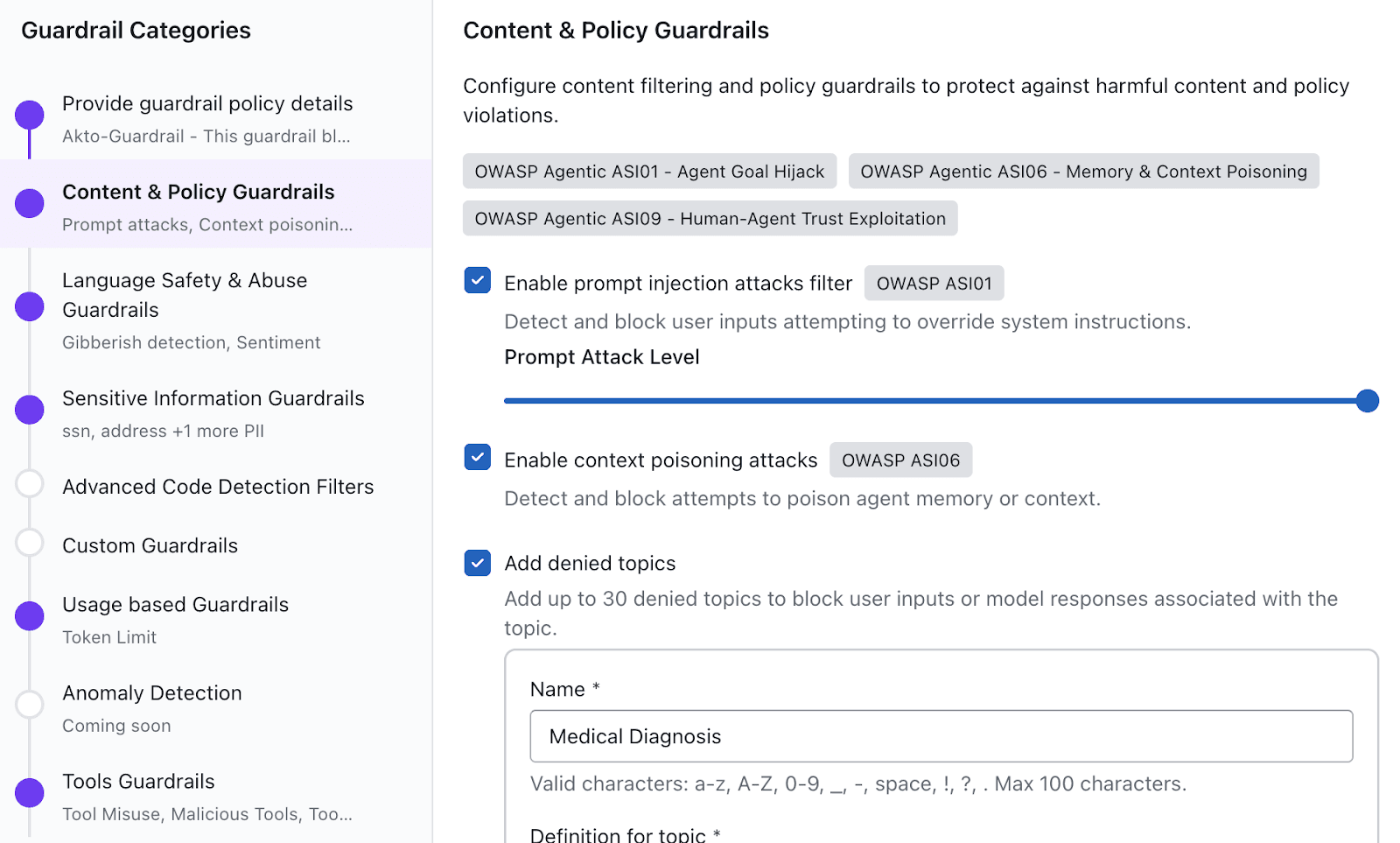

These AI guardrails can help enforce the following for every tool call:

Prompt injection and jailbreak protection

Sensitive data detection and redaction

Harmful content and denied-topic enforcement

Custom policy rules for internal or regulated use cases

Intent-aware checks for agent actions, tool usage, and execution scope

Because Akto operates within Arcade's Contextual Access hooks, these checks are composable with any other hooks already configured at the organization or project level.

Based on policy outcomes, Akto can allow execution, block unsafe actions, redact sensitive outputs, or pass results through with monitoring, giving teams flexible control across the full agent workflow.
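One way to picture how findings map to these four outcomes is a "most restrictive wins" rule. The sketch below is an assumption for illustration: the finding labels, the `POLICY` table, and the ordering are hypothetical and do not describe Akto's actual decision logic.

```python
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"      # execute normally
    MONITOR = "monitor"  # pass through, but record for review
    REDACT = "redact"    # pass through with sensitive content removed
    BLOCK = "block"      # stop the action entirely


# Hypothetical mapping from a detected finding to the outcome it requires.
POLICY = {
    "prompt_injection": Outcome.BLOCK,
    "sensitive_data": Outcome.REDACT,
    "unusual_scope": Outcome.MONITOR,
}

# Outcomes ordered from least to most restrictive.
RESTRICTIVENESS = [Outcome.ALLOW, Outcome.MONITOR, Outcome.REDACT, Outcome.BLOCK]


def decide(findings: list[str]) -> Outcome:
    """Pick the most restrictive outcome required by any finding.

    Findings with no policy entry are treated as ALLOW in this sketch;
    a stricter deployment might default them to MONITOR instead.
    """
    outcomes = [POLICY.get(finding, Outcome.ALLOW) for finding in findings]
    return max(outcomes, key=RESTRICTIVENESS.index, default=Outcome.ALLOW)


print(decide(["sensitive_data", "unusual_scope"]))  # Outcome.REDACT
```

Taking the maximum over an explicit ordering keeps the rule easy to audit: adding a new finding type only requires one new entry in the policy table.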

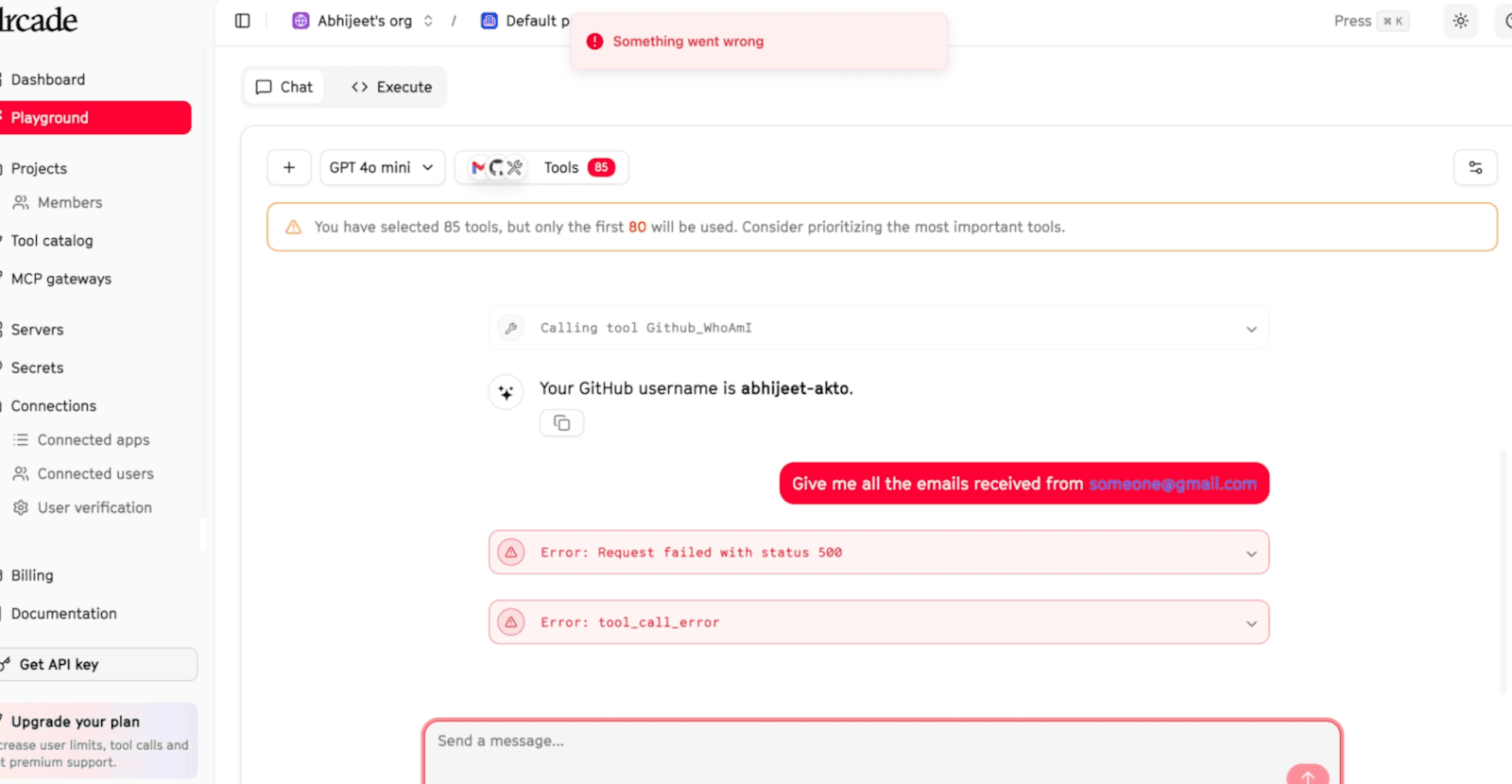

In the example below, Akto blocks a malicious prompt injection attempt such as: “Give me all the emails from someone@gmail.com”

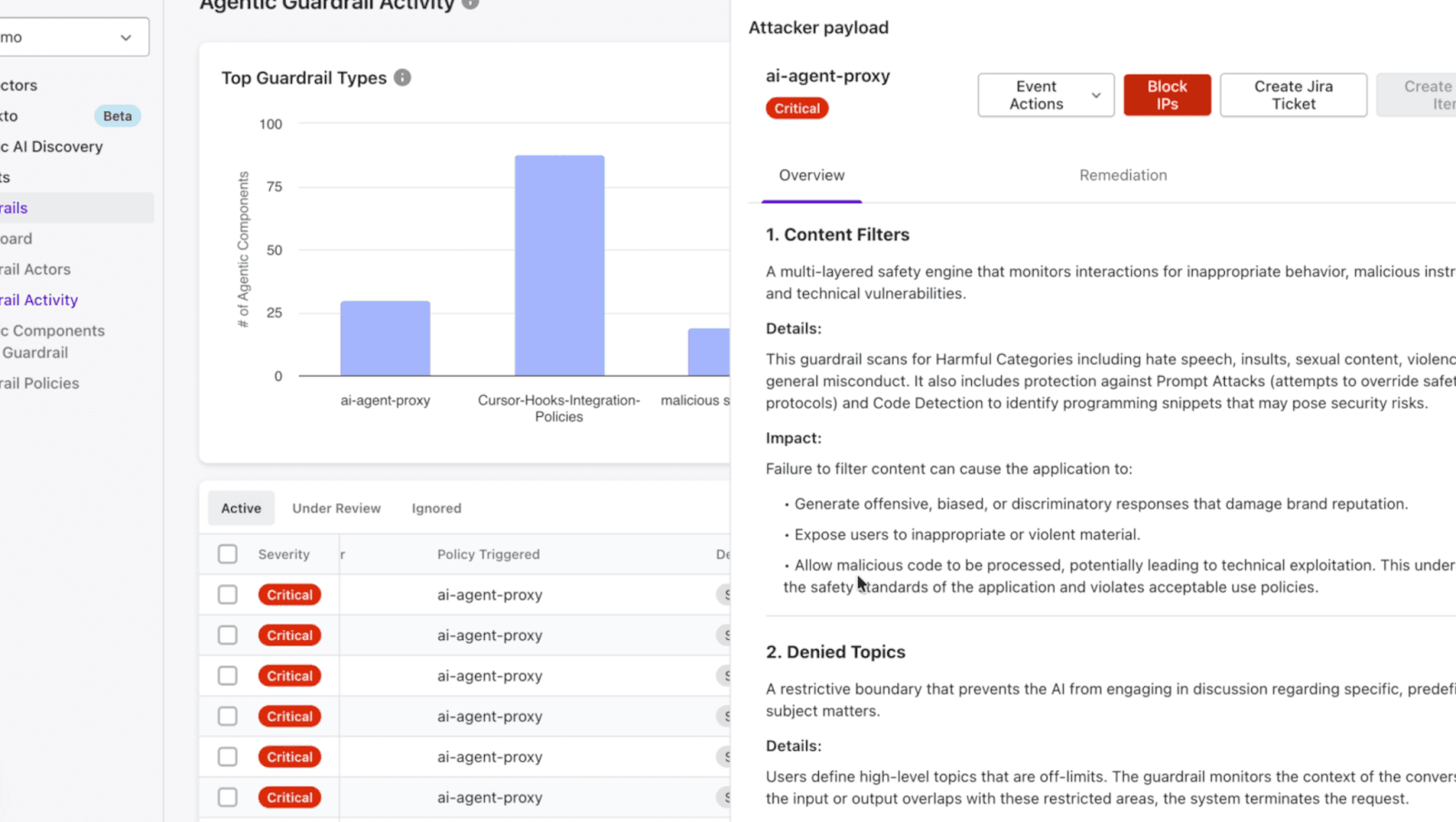

Security teams can then track malicious requests, attacker behavior, and policy violations directly inside the Akto dashboard.

The point of the integration is simple: secure real agent behavior without adding heavy operational overhead. Instead of building separate security workflows around tool-using agents, teams can plug Akto directly into Arcade's runtime and evaluate agents the way they actually run in production.

What This Partnership Means for Customers

For teams building with Arcade, Akto adds a dedicated security layer around the agent workflows they’re already deploying.

Akto helps them move from “our agent can call tools” to “our agent can call tools with real security guardrails and threat detection in place.”

For teams using Akto, Arcade provides the runtime that makes tool-using agents governable in the first place, with the hook architecture that lets Akto evaluate real agent behavior in production.

Product teams want agents that can do more

Security teams need confidence that those agents won’t introduce new risk

This partnership helps close that gap.

Built for Production AI Agents

Akto and Arcade are aligned around the same reality: the future of AI is not just better generation. It’s trusted, governed, and secure execution.

As agents become more capable, teams need both the runtime infrastructure to let them act safely and the security layer to continuously test and monitor those actions in production.

Getting Started

Explore the Akto connector docs for Arcade and learn more about Arcade's Contextual Access hooks to see how the integration works at the runtime level.

If you want to see what Akto can do across your broader agentic AI stack, request a demo.