Akto Partners with LangChain to Bring Production-Ready Security to AI Agents

Akto partners with LangChain to deliver production-ready security for AI agents, enabling safer deployment, monitoring, and protection.

Akash

AI agents are rapidly becoming part of production software. They retrieve context, call tools, interact with APIs, and increasingly take action across real systems. As adoption grows, so does the need for security controls that can keep pace with how these agents are built and deployed.

Today, we’re excited to announce that Akto is partnering with LangChain to help organizations build and operate AI agents more securely. As part of this partnership, Akto now supports AI agents built with both LangChain and LangGraph, giving teams a stronger path to bring security visibility, testing, and runtime guardrails into their agentic AI stack.

This partnership brings together two important parts of the modern AI application lifecycle: the frameworks teams use to build agents, and the security layer needed to safely run them in production.

Bringing Together AI Agent Engineering and Security

LangChain has become one of the most widely adopted ecosystems for building agentic applications. Its open-source frameworks help teams move from experimentation to production, whether they’re building quickly with LangChain or orchestrating more durable, stateful workflows with LangGraph. Alongside those frameworks, LangSmith provides observability, evaluation, and deployment capabilities that many teams already rely on to monitor and improve agent behavior.

Akto is the security platform for the AI agents, MCP servers, and LLMs that enterprise teams build or use. It provides visibility and risk assessment, and it enforces guardrails that block prompt injection, jailbreaks, data exposure, and other malicious actions. This gives security and engineering teams control over how their AI agents behave.

Together, Akto and LangChain are helping teams bridge the gap between building powerful AI agents and operating them securely at scale.

As AI Agents Gain More Autonomy, Security Has to Be Built In

AI agents introduce a different security model than traditional applications. Unlike static applications, agents can make multi-step decisions, call tools dynamically, retrieve sensitive context, and produce outputs that may trigger downstream actions. That means organizations need to think beyond performance and observability; they need ways to continuously assess risk, enforce policy, and prevent unsafe behavior at runtime.

With LangChain and LangGraph, teams get the flexibility to build and orchestrate powerful agentic workflows. With Akto, those same teams can add the security layer needed to make those workflows more production-ready.

That includes protection against risks such as:

Prompt injection and jailbreak attempts

Sensitive data exposure in prompts, tool calls, or outputs

Unsafe or unauthorized tool usage

Policy violations in internal or regulated environments

Risky or untrusted behavior across multi-step agent flows

As more organizations move from pilots to production deployments, that combination becomes increasingly important.

How Akto Integrates with LangChain

Akto supports two ways to connect with LangChain applications, depending on how teams want to introduce security into the workflow.

For teams that want inline, real-time enforcement, Akto integrates synchronously through LangChain Hooks, allowing prompts and responses to be validated directly in the execution path. For teams that want to start with visibility and monitoring, Akto also supports an asynchronous path through LangSmith tracing, where execution traces are periodically pulled into Akto Argus for security analysis after the interaction is complete.

Together, these two integration models give teams flexibility in how they bring security into LangChain and LangGraph workflows, whether they want to enforce guardrails inline from day one or begin with trace-based visibility using the observability tooling they already have.

Inline Guardrails for LangChain and LangGraph Workflows

For teams that want inline, synchronous enforcement, Akto integrates directly into the agent workflow so security checks can happen before and after execution.

That integration is powered by pre-execution and post-execution hooks, allowing teams to apply guardrails at the moments that matter most: before an agent takes action, and again after the interaction is complete.

In simple terms, that means Akto can evaluate a request before the agent takes action, not just after something has already happened.

Using the pre-execution hook, Akto applies guardrail checks before the LLM, tool, or MCP call is allowed to run. That can include validating for prompt injection, PII or sensitive data exposure, and policy violations. If a request is unsafe, Akto can stop it before it reaches execution. If it passes, the workflow continues normally.
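
To make the pre-execution idea concrete, here is a minimal sketch in plain Python. It is not Akto's implementation and does not use any LangChain API. The names `pre_execution_check`, `guarded_call`, `Verdict`, and `GuardrailBlocked`, as well as the regex patterns, are all hypothetical and chosen only for illustration. Real detection would be far more thorough than a few patterns.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only; not Akto's detection logic.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Verdict:
    allowed: bool
    reason: str = ""


class GuardrailBlocked(Exception):
    """Raised when a request fails the pre-execution check."""


def pre_execution_check(prompt: str) -> Verdict:
    """Return a verdict for a prompt before any LLM, tool, or MCP call runs."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(prompt):
            return Verdict(False, "possible prompt injection")
    if EMAIL_PATTERN.search(prompt):
        return Verdict(False, "possible PII (email address)")
    return Verdict(True)


def guarded_call(prompt: str, action):
    """Run action(prompt) only if the pre-execution check passes."""
    verdict = pre_execution_check(prompt)
    if not verdict.allowed:
        # Unsafe requests stop here and never reach execution.
        raise GuardrailBlocked(verdict.reason)
    return action(prompt)
```

In this sketch, `action` stands in for whatever the agent was about to do, such as a model call or a tool invocation. A blocked request raises before `action` is ever invoked, while a passing request flows through unchanged.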

After the action completes, the post-execution hook captures the interaction and sends the trace into Akto Argus. From there, teams gain the visibility and security layer needed for continuous testing, auditability, and deeper analysis across agent behavior in production.
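
The post-execution side can be pictured with a similarly small sketch. It assumes a hypothetical `sink` callable that stands in for whatever forwards records to a security backend; the record fields and the `run_with_trace` name are illustrative and are not the format Akto Argus uses.

```python
import json
import time
from typing import Any, Callable


def run_with_trace(
    name: str,
    payload: str,
    action: Callable[[str], Any],
    sink: Callable[[str], None],
) -> Any:
    """Execute action(payload), then hand a JSON trace record to sink."""
    started = time.time()
    output = None
    error = None
    try:
        output = action(payload)
        return output
    except Exception as exc:
        # Record the failure, then let the caller see the original error.
        error = repr(exc)
        raise
    finally:
        # Runs on success and on failure, so every interaction is captured.
        record = {
            "name": name,
            "input": payload,
            "output": None if output is None else str(output),
            "error": error,
            "duration_s": round(time.time() - started, 4),
        }
        sink(json.dumps(record))
```

Putting the capture in a `finally` block is a deliberate choice here: failed interactions are often the most interesting ones for later analysis, so they are recorded as well as successful ones.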

This gives teams a practical way to add real-time guardrails inline with agent execution, while also preserving the broader visibility and security workflows they need after the interaction is complete.

That means engineering and security teams can layer protection on top of existing agent workflows without introducing a separate development path or requiring teams to re-architect how their agents are built.

LangSmith Tracing for Visibility-First Security Workflows

For teams that want a more lightweight, asynchronous path, Akto also supports LangSmith tracing as a way to bring existing agent traces into Akto Argus without changing application code.

The LangSmith Connector is cron-based: it periodically pulls execution traces from LangSmith and ingests them into Akto Argus. This allows teams to use their existing LangSmith instrumentation as the source of runtime trace data while extending that visibility into Akto for security monitoring and analysis.

In practice, on each scheduled run, Akto collects:

LangChain application metadata

Recent execution traces

Associated input and output data from those traces
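
A scheduled pull of this kind is commonly built around a cursor that remembers how far the last run got. The sketch below illustrates that pattern in plain Python. It does not call the LangSmith API and is not Akto's connector: `fetch_since` and `ingest` are hypothetical stand-ins for the trace source and the security platform, and `Trace` is a simplified record invented for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Iterable


@dataclass
class Trace:
    trace_id: str
    finished_at: datetime
    inputs: str
    outputs: str


@dataclass
class TraceSync:
    # Hypothetical stand-in for the trace source (returns traces at or after a time).
    fetch_since: Callable[[datetime], Iterable[Trace]]
    # Hypothetical stand-in for the platform that receives each trace.
    ingest: Callable[[Trace], None]
    cursor: datetime = datetime(1970, 1, 1, tzinfo=timezone.utc)
    seen: set = field(default_factory=set)

    def run_once(self) -> int:
        """One scheduled run: ingest unseen traces and advance the cursor."""
        count = 0
        batch = sorted(self.fetch_since(self.cursor), key=lambda t: t.finished_at)
        for trace in batch:
            if trace.trace_id in self.seen:
                continue  # already ingested on an earlier run
            self.ingest(trace)
            self.seen.add(trace.trace_id)
            self.cursor = max(self.cursor, trace.finished_at)
            count += 1
        return count
```

A scheduler such as cron would call `run_once()` at a fixed interval. Tracking both a cursor and the set of seen IDs keeps a trace that lands exactly on the cursor timestamp from being ingested twice.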

This makes the LangSmith integration a practical option for teams that want to begin with trace-based visibility into how agents behave in production, understand what prompts and responses are flowing through the system, and use those traces as part of broader security review and investigation workflows.

Akto ingests LangSmith traces to capture real execution flow, including prompts, tool calls, and model responses.

That trace data is mapped into Akto to give security teams clear visibility into agent requests, responses, and behavior in one place.

From Observability to Security: Why This Matters in Production

Tracing and observability are essential for understanding how an AI agent behaves. But in production, organizations also need to answer a different question: was that behavior safe?

That’s where Akto adds value on top of LangChain development and LangSmith observability.

With Akto Argus, teams can move from simply monitoring agent execution to actively testing and protecting it through three core layers:

Runtime visibility into what’s actually running – discover AI agents, MCP servers, and GenAI applications in production so teams can understand what exists, where it’s running, and how it behaves.

Continuous risk assessment as systems change – evaluate agent behavior as prompts evolve, tools change, and workflows shift, so security posture reflects current runtime behavior rather than a point-in-time snapshot.

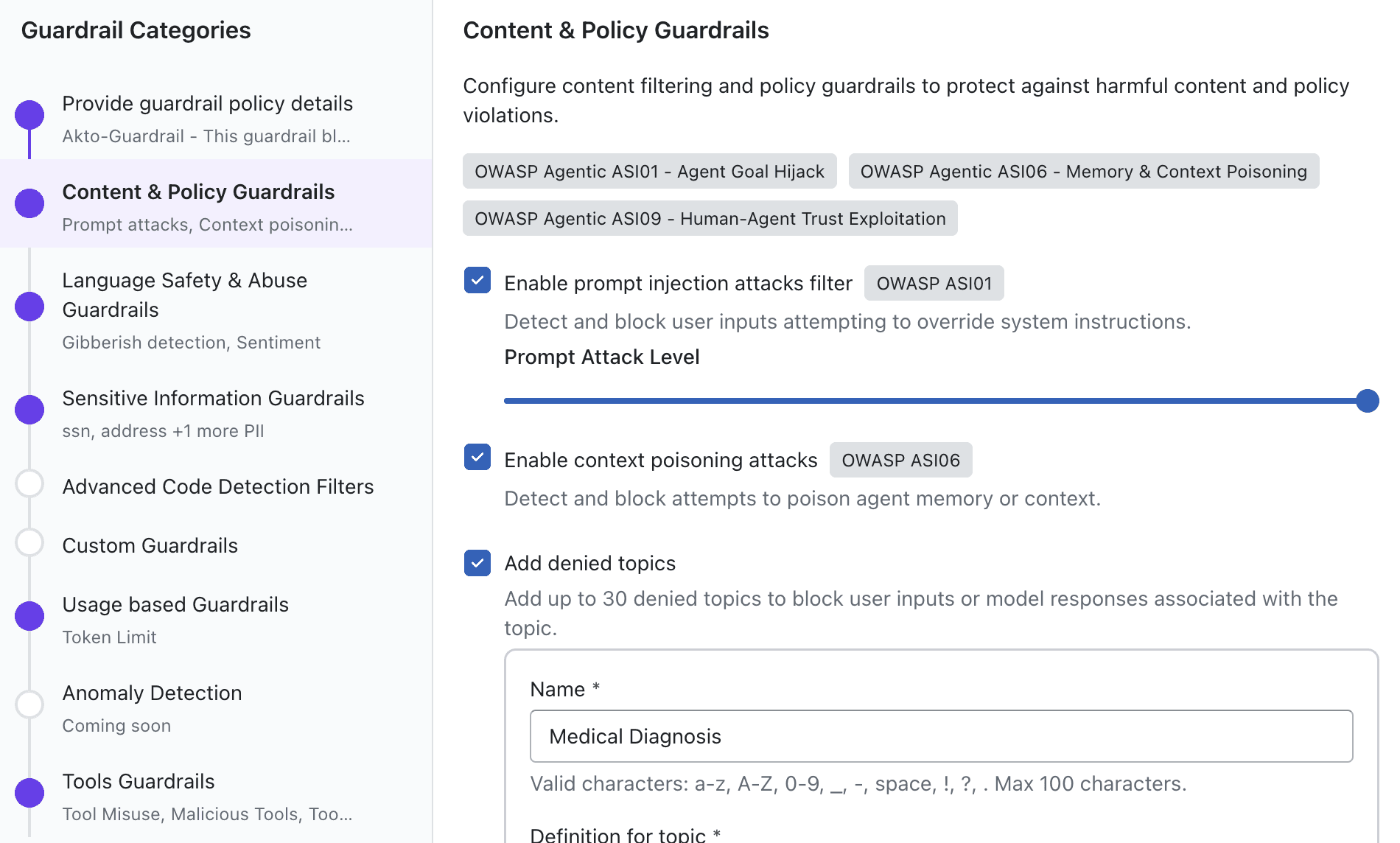

Runtime guardrails that enforce what agents can and cannot do – define and enforce policies during execution to control what actions an agent is allowed to take, what data it can access or expose, which tools or MCPs it can invoke, and what topics or outputs should be restricted.

This is where Akto guardrails become especially important. They’re enforced while the agent is operating in production, not after something has already gone wrong. Instead of simply alerting on risky behavior, guardrails help teams apply real-time controls across the agent lifecycle, from prompt handling to tool execution to final response delivery.

That can include controls such as:

Blocking prompt injection and jailbreak attempts

Preventing sensitive data exposure or unauthorized data access

Restricting unsafe tool execution or MCP invocation

Enforcing denied topics, restricted outputs, or harmful content policies

Applying runtime policy decisions before risky actions are completed

Maintaining auditability and governance across agent behavior in production
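
As a rough illustration of what a runtime policy might look like in code, the sketch below models two of the controls above: a tool allowlist and a denied-topics list. The `AgentPolicy` class and both check functions are hypothetical and are not Akto's policy format. Simple substring matching is used only to keep the example short.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class AgentPolicy:
    # Hypothetical policy shape, invented for this example.
    allowed_tools: frozenset = frozenset()
    denied_topics: frozenset = frozenset()


def check_tool_call(policy: AgentPolicy, tool_name: str) -> Tuple[bool, str]:
    """Allow a tool call only if the tool is on the allowlist."""
    if tool_name not in policy.allowed_tools:
        return False, f"tool '{tool_name}' is not on the allowlist"
    return True, "ok"


def check_output(policy: AgentPolicy, text: str) -> Tuple[bool, str]:
    """Reject output that mentions any denied topic (case-insensitive)."""
    lowered = text.lower()
    for topic in sorted(policy.denied_topics):
        if topic in lowered:
            return False, f"output mentions denied topic '{topic}'"
    return True, "ok"
```

Each check returns a decision together with a reason string, so a blocked action can be logged with enough context to support later audit and review.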

This matters because AI agent security is not just about spotting suspicious behavior. It’s about giving teams the ability to govern autonomous systems in real time, before unsafe actions, data leakage, or policy violations turn into production incidents.

Built for the Teams Responsible for Shipping and Securing AI Agents

This partnership is designed to support the full set of teams involved in enterprise AI adoption.

AI engineers and developers can keep building in the LangChain ecosystem they already know

Platform teams can standardize how agent workflows are monitored and secured

Security teams gain better visibility, testing, and runtime enforcement for AI-specific risks

Leadership teams get a more practical path from experimentation to governed production deployment

Instead of treating AI agents as isolated experiments or black-box systems, organizations can bring them into a more mature operational model, one that balances speed, flexibility, and security.

A Stronger Path to Trusted AI Agents

If your team is already building with LangChain or LangGraph and LangSmith, you can now bring those applications into Akto Argus and start adding security visibility, testing, and runtime guardrails to your AI agent stack.

Explore the integrations:

Want to see what Akto can do across your full AI stack? Request a demo →
